- Feb 14, 2024

The Future of Artificial Intelligence: Threats Facing Businesses and Possible Solutions

This article discusses how AI technologies have been wielded by fraudsters in recent years, what these trends tell us about the future, and how businesses can protect themselves.

According to Sumsub’s 2023 Identity Fraud Report, AI-powered fraud was the top-trending type of attack in 2023, with the number of deepfakes detected worldwide rising tenfold from 2022 to 2023.

We at Sumsub prepared this article to explain how companies can confront the spread of AI-generated fraud, and what steps regulators have already taken to mitigate some of the harms.

AI is both a threat and a solution

There are two sides to AI, as it can be used both by businesses and criminals.

On the one hand, the misuse of AI contributes to the spread of misinformation and facilitates fraud. This is made worse by the fact that anyone can now generate fake photos, videos, and audio that are hard to tell apart from the real thing with the naked eye.

In September 2023, an AI-generated audio recording impersonated a Slovak party leader and a journalist discussing how to rig the country's parliamentary election. The fake audio clip was posted on Facebook and went viral on the eve of the election, and it may have influenced the outcome. This is a clear example of the broader societal consequences malicious deepfakes can have.

When it comes to AI-powered fraud, the only way to fight fire is with fire. That’s why the business world is also adopting AI to verify identities with biometrics, reducing the chances of fraud. AI, in this case, becomes the answer to AI-powered fraud, as it can analyze vast datasets, identifying unusual patterns and anomalies that may indicate fraudulent activity.
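To make "identifying unusual patterns and anomalies" more concrete, here is a minimal sketch of one simple statistical technique: flagging outliers with a modified z-score based on the median absolute deviation (MAD). It is an illustration only, not a description of how Sumsub or any production fraud system works. The function name `flag_anomalies`, the threshold of 3.5, and the sample amounts are all assumptions made for this example.

```python
from statistics import median


def flag_anomalies(values, threshold=3.5):
    """Return the indices of values whose modified z-score exceeds threshold.

    Uses the median and the median absolute deviation (MAD), which are
    less distorted by extreme values than the mean and standard deviation.
    """
    med = median(values)
    abs_dev = [abs(v - med) for v in values]
    mad = median(abs_dev)
    if mad == 0:
        # No measurable spread, so no value can be scored as an outlier.
        return []
    # 0.6745 rescales the MAD so the score is comparable to a standard
    # z-score when the data is roughly normally distributed.
    return [i for i, d in enumerate(abs_dev) if 0.6745 * d / mad > threshold]


# Hypothetical transaction amounts for a single account (made-up data).
amounts = [42.0, 38.5, 45.0, 40.0, 41.5, 39.0, 980.0, 43.0]
print(flag_anomalies(amounts))  # prints [6], the index of the 980.0 payment
```

Real fraud detection would likely combine many more signals (device, behavior, biometrics) and use trained models rather than a single statistic, but the underlying idea is the same: establish what normal looks like, then flag what deviates from it.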

But even if businesses beef up their defenses with AI-powered solutions, regulations are still necessary to keep the playing field safe for everyone.

Regulatory framework

As the AI boom continues, regulators are joining the hype. New regulations are constantly being introduced; however, existing regulatory frameworks often don’t fit the bill, creating legislative challenges.

China

China has been particularly active in regulating AI, enacting regulations at a surprising speed.

The country has approved at least three major AI regulations: “Administrative Measures for Generative Artificial Intelligence Services”, “Internet Information Service Algorithmic Recommendation Management Provisions”, and the “Regulations on the Administration of Deep Synthesis of Internet Information Services”, which is the first of its kind to include:

- The need for users to consent to the use of their image in any deep synthesis technology

- A prohibition on using deep synthesis services to disseminate fake news

- The need for deepfake services to authenticate the real identity of users

- The need for synthetic content to be labeled, signaling that the image or video has been altered with technology

The Chinese approach to AI regulations generally follows a vertical strategy, where the country regulates one AI application at a time. For 2024, China will not only enforce existing regulations, but also promises to dive into even trickier topics, such as “black boxes”.

EU

The European Union follows a different approach from China, approving a comprehensive, horizontal, cross-sector regulation of AI systems and models in 2024: the EU AI Act (AIA).

The AIA follows a risk-based approach, where certain AI applications are prohibited while others, labeled as “high-risk”, are subject to requirements on governance, risk management, and transparency, among others. However, the AIA is not the only regulatory initiative in the EU focusing on AI.

The AI “regulatory package” also includes the Revised Product Liability Directive, which enables civil compensation claims against manufacturers for harm caused by defective products embedded with AI systems, and the AI Liability Directive, which allows for non-contractual compensation claims against any person (providers, developers, users) for harm caused by AI systems which is due to the fault or omission of that person.

The EU also already has two recent laws in this area: the Digital Markets Act and the Digital Services Act. The latter requires that recommendation algorithms from certain platforms go through risk assessments and third-party audits.

UK

The UK has adopted a less ‘hands-on’ approach when it comes to AI regulation, opting for what the government has referred to as a “business friendly” approach.

In 2023, the UK released its AI White Paper, a set of guidelines on how AI can be developed in a safe and trustworthy manner. Following calls from Parliament for a more assertive approach to AI governance, the AI (Regulation) Bill was introduced in the UK Parliament, which, among other things, would implement some of the points established under the AI White Paper.

The UK strategy towards AI also calls attention to:

- The sectoral approach, through which the government delegates the task of regulating AI to already existing regulatory agencies

- A focus on AI safety, more specifically the existential risks AI might present, as evidenced by the UK's AI Safety Summit and the subsequent “Bletchley Declaration”

US

The US has a patchwork of AI regulations. States have taken initiatives of their own, sometimes without regard to the federal approach to the topic. This can be seen in the emergence of state-level deepfake regulations in the absence of related federal legislation. Virginia's law focuses on pornographic deepfakes, while California's and Texas's laws cover deepfakes in elections.

In 2023, there were several efforts to regulate how federal agencies use AI. While these do not apply directly to private entities, their requirements are expected to affect the private sector eventually, given that private companies are the main technology providers to public organizations. The best example of this is the Biden-Harris Administration's Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence.

Apart from this, the US already has sound guidelines for AI development and use, such as the NIST AI Risk Management Framework (AI RMF). Although the NIST AI RMF is voluntary, adherence to it by private companies signals a commitment to market best practices.

What’s next?

In the near future, we should expect more countries to implement regulations on AI systems and models. The EU AI Act is expected to evolve into an international benchmark, influencing jurisdictions around the world. In the US, we should keep an eye on upcoming developments from NIST, which should continue to issue important guidelines and standards.

Other countries will join the race as well, and international treaties on AI are likely to arrive sooner rather than later. For example, the EU AI Pact, which aims to prepare companies for the upcoming implementation of the EU AI Act, convenes both EU and non-EU industry players. Similar initiatives may follow.

From a business point of view, companies need to start adapting to existing frameworks and guidelines around AI governance, ethics, and risk management, while keeping an eye on incoming regulatory requirements. A good example to follow is ISO/IEC 42001:2023, which sets out requirements for establishing an AI management system.

Relevant articles

- Article

- 3 weeks ago

- 12 min read

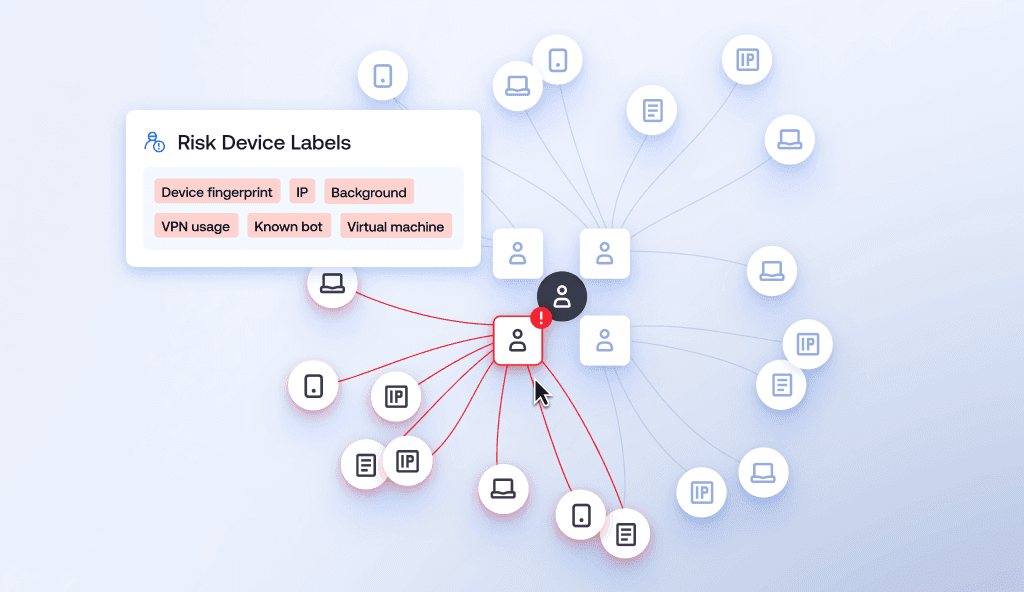

Learn how device intelligence assesses device risk in real time using technical signals and device fingerprinting—without disrupting the customer exp…

- Article

- 2 days ago

- 9 min read

Learn what AI recommendation poisoning is, how it threatens business decision-making, and ways to protect against it.

What is Sumsub anyway?

Not everyone loves compliance—but we do. Sumsub helps businesses verify users, prevent fraud, and meet regulatory requirements anywhere in the world, without compromises. From neobanks to mobility apps, we make sure honest users get in, and bad actors stay out.