- Oct 31, 2023

The Dark Side of Deepfakes: A Halloween Horror Story

Learn how fraudsters can turn you into a deepfake and how to protect yourself.

A woman opened a link sent to her by email to see herself in a porn video with her real name in the title. The video looked real, but it wasn’t actually her—it was a deepfake. Sharing her story with Elle Magazine, the woman said: “Some pictures even had lengthy and detailed descriptions of me. One stated who my childhood best friend was, another my name and finally one, I discovered to my absolute horror, even shared my home address.”

In another case, two grandparents received a call from someone they thought was their grandson. He said he was in jail, with no wallet or cellphone, and needed cash for bail. The grandmother, a 73-year-old woman, scrambled to help, withdrawing all the cash she could from the bank. Only later did she learn that the voice on the line was a deepfake and the call was a scam.

Depression, anxiety, financial losses, ruined reputations, and cyberbullying are just a few of the consequences that deepfakes can have. What’s more, deepfakes have been on the rise for the last several years, with image-generation and face-swap tools widely available, including Midjourney and AI filters on TikTok. Of course, in certain cases deepfakes can be detected in time. For instance, companies can employ Liveness Detection to reliably ensure that customers are truly present and genuine. However, this is a relatively narrow use case.

So what happens when deepfake technology falls into the wrong hands?

Deepfake porn

For years, AI has been systematically used to abuse and harass women. Machine learning (ML) algorithms can now insert women’s images into porn videos without their permission—and, today, deepfake porn is endemic.

An anonymous researcher who studies non-consensual deepfake porn recently shared an analysis with WIRED detailing how widespread such videos have become. So far in 2023, 113,000 deepfake porn videos have been uploaded to websites that appear in search engine results. That is a 54 percent increase over the 73,000 videos uploaded in all of 2022. The research also forecasts that, by the end of 2023, “the total number of deepfake porn videos will be more than the total number of every other year combined.”
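The year-over-year figure can be checked with a quick calculation. The sketch below uses only the two video counts quoted above; the function name `percent_increase` is illustrative and not part of the cited analysis.

```python
def percent_increase(old: int, new: int) -> float:
    """Return the relative growth from old to new, in percent."""
    return (new - old) / old * 100


videos_2022 = 73_000          # all of 2022, as quoted above
videos_2023_so_far = 113_000  # 2023 to date, as quoted above

increase = percent_increase(videos_2022, videos_2023_so_far)
print(f"Increase over 2022: {increase:.1f}%")
```

The result is a little under 55 percent, in line with the roughly 54 percent reported.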

The spread of deepfake porn is made worse by the fact that widely available platforms, including Google, Cloudflare, and Twitch, are used for distribution. Meanwhile, the consequences for victims are severe, including depression, self-injury, and suicide.

Information war and propaganda

Deepfakes are also used to manipulate public opinion and spread disinformation. This can include realistic videos or audio recordings that portray public figures saying or doing things they never said or did. Deepfake disinformation is often released during critical moments, such as elections and armed conflicts, to fan political flames.

Manipulated media can polarize communities and damage the reputations of media sources, politicians, or even entire nations. Even if the content is later proven to be disinformation, the damage is often irreversible, and it is difficult, if not impossible, to restore a harmed reputation.

Romance scams

Deepfakes are also used in romance scams to create fake personas that deceive victims into forming emotional connections online.

Scammers use deepfake technology to present themselves as an attractive person seeking romance. The goal is to gain the victim’s trust and eventually manipulate them into sending money or divulging personal information. Just recently, a woman from the US state of Utah was convicted in an online romance scam that cost her victims over $6 million.

Of course, an attractive deepfake photo isn’t enough to get the job done. Romance scammers are usually skillful manipulators who use social engineering techniques to get what they want. Therefore, it’s essential to be skeptical when an unknown person approaches you online for any reason.

Voice deepfakes

AI voice-generating apps can analyze what makes a person’s voice unique—including age, gender, and accent—and then re-create the pitch, timbre, and individual sounds of any person’s voice.

Voice deepfakes are exploited by fraudsters in various ways, including:

- Impersonating the voice of a victim’s family member or colleague to convince the victim to reveal sensitive information or, most often, send funds

- Creating fake customer service recordings that sound like legitimate companies to deceive people into sharing personal data or making financial transactions

- Impersonating public or well-known figures to spread false information or commit financial scams.

According to Pindrop, a voice interaction tech company, most voice deepfake attacks target credit card service call centers.

Another major vector of attack involves targeting individual CEOs and other major stakeholders, who can be duped into making significant financial transactions through voice deepfakes.

Advertising and movies

Deepfakes are increasingly used in advertising and movies for various purposes. In the film industry, deepfakes can be used for visual effects, enabling filmmakers to recreate younger versions of actors or bring deceased actors back to the screen.

In advertising, companies use deepfake technology to insert celebrities' faces onto the bodies of models to promote their products, creating more attention-grabbing and persuasive content.

However, things can go seriously wrong when such methods are employed without the actor’s consent. These deepfakes can not only damage the careers of the actors being impersonated, but also undermine the credibility and authenticity of the entertainment and advertising industries.

How can we spot deepfakes and ensure our photos aren’t used by scammers?

While there is no way to completely eliminate the risk of deepfake abuse, there are preventative measures that you can take:

- Be skeptical if a seemingly “attractive” or “famous” person suddenly approaches you online for any reason

- If you suddenly receive a call or a video message from a relative asking for money or sensitive information, verify the request with them through another channel, such as a different messenger or a phone number you already know

- Examine images thoroughly. When spotting deepfakes, the devil is in the details: you can often recognize one by light and shadows that fall in the wrong direction on the face, inconsistencies in facial features, blurriness or distortions around the edges of the face, hair, or objects in the image, visual noise, and unnatural backgrounds. You can also check the photo’s metadata for signs that it has been altered in editing software. In videos, deepfaked subjects may lack natural blinking or breathing movements

- Be cautious about sharing personal photos online. You can limit the number of personal photos you share on social media, or adjust privacy settings to restrict who can access your photos

- Avoid sharing high-resolution, unedited photos that could be easily manipulated

- Thoroughly read the terms and conditions of any AI app to make sure it cannot use your photos for anything beyond your own use of the app

- Depending on the nature of the content, contact local law enforcement agencies when your photos or videos have been stolen and/or used in an inappropriate manner.
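For readers comfortable with a little scripting, the metadata check mentioned in the list above can be sketched in Python. This is a minimal illustration, not a forensic tool: it assumes a JPEG file with a standard EXIF block and reads only the "Software" tag, which some editors write when they save an image. The function names `find_exif_software` and `_software_from_tiff` are hypothetical. A missing tag proves nothing, because metadata is easy to strip or forge, and a present tag only shows that the file passed through some software.

```python
import struct
from typing import Optional

SOFTWARE_TAG = 0x0131  # EXIF/TIFF "Software" tag (ASCII string)


def _software_from_tiff(tiff: bytes) -> Optional[str]:
    """Read the Software tag from the first image directory of a TIFF block."""
    if len(tiff) < 8:
        return None
    if tiff[:2] == b"II":
        endian = "<"  # little-endian
    elif tiff[:2] == b"MM":
        endian = ">"  # big-endian
    else:
        return None
    magic, ifd_offset = struct.unpack(endian + "HI", tiff[2:8])
    if magic != 42 or ifd_offset + 2 > len(tiff):
        return None
    (entry_count,) = struct.unpack(endian + "H", tiff[ifd_offset:ifd_offset + 2])
    for i in range(entry_count):
        start = ifd_offset + 2 + 12 * i
        entry = tiff[start:start + 12]
        if len(entry) < 12:
            return None
        tag, value_type, count = struct.unpack(endian + "HHI", entry[:8])
        if tag != SOFTWARE_TAG or value_type != 2:  # type 2 = ASCII
            continue
        if count <= 4:
            raw = entry[8:8 + count]  # short values are stored inline
        else:
            (offset,) = struct.unpack(endian + "I", entry[8:12])
            raw = tiff[offset:offset + count]
        return raw.split(b"\x00")[0].decode("ascii", errors="replace")
    return None


def find_exif_software(jpeg_bytes: bytes) -> Optional[str]:
    """Return the EXIF Software string of a JPEG, or None if not found."""
    if jpeg_bytes[:2] != b"\xff\xd8":  # not a JPEG
        return None
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            return None  # unexpected byte; give up rather than guess
        marker = jpeg_bytes[pos + 1]
        if marker in (0xDA, 0xD9):  # image data or end of file reached
            return None
        (length,) = struct.unpack(">H", jpeg_bytes[pos + 2:pos + 4])
        payload = jpeg_bytes[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return _software_from_tiff(payload[6:])
        pos += 2 + length
    return None
```

To try it on a file, read the bytes first, for example `find_exif_software(open("photo.jpg", "rb").read())`, where `photo.jpg` is a placeholder path. Treat the output as one weak signal alongside the visual checks above, not as proof either way.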

Relevant articles

- Article

- 2 weeks ago

- 12 min read

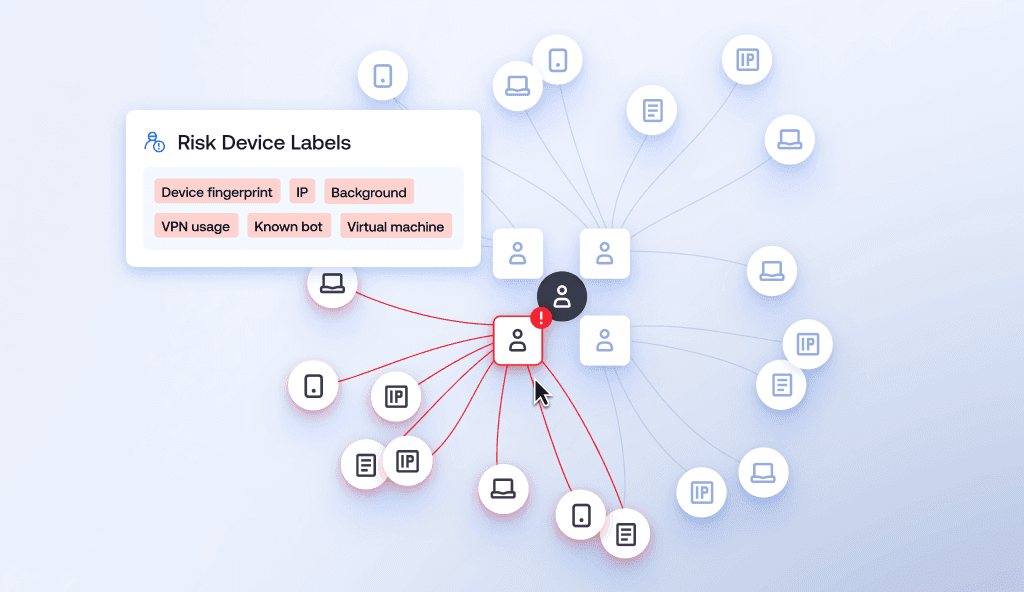

Learn how device intelligence assesses device risk in real time using technical signals and device fingerprinting—without disrupting the customer exp…

- Article

- 3 weeks ago

- 17 min read

What is Sumsub anyway?

Not everyone loves compliance—but we do. Sumsub helps businesses verify users, prevent fraud, and meet regulatory requirements anywhere in the world, without compromises. From neobanks to mobility apps, we make sure honest users get in, and bad actors stay out.