- Apr 15, 2026


Fraud Trends 2026: AI Scams, Deepfakes, and Emerging Threats

Discover the top fraud trends for 2026: AI deepfakes, pig butchering, mobile payment attacks, and proven strategies to keep your business safe.

When an American couple received a call from their “grandson” asking for help, they didn’t hesitate to act. The caller claimed he was in jail without his phone or wallet following a car crash and urgently needed money for bail. The grandmother rushed to the bank and withdrew as much cash as she could. Only later did she learn that the voice on the call had been a deepfake and that the couple had fallen victim to an impersonation scam.

In 2026, AI scams are everywhere, with deepfakes now accounting for 11% of global fraudulent activity. However, deepfake fraud is just one part of a broader wave of new, sophisticated threats to watch out for this year.

In this article, we explore the most dangerous fraud trends and what both businesses and users can do to mitigate the risks through effective identity fraud prevention.

The 2026 fraud landscape: Stats and key shifts

More than $12.5 billion was lost to fraudsters in 2024, a 25% increase on the previous year. This should come as no surprise to those who understand how scammers’ tactics have evolved in recent years.

Fraudsters are no longer just sending phishing links that end up in your spam folder. They’re using recent breakthroughs in AI to target vulnerable gaps in every sector. In the United Kingdom, for example, deepfake attempts increased by 94%, according to the Sumsub Identity Fraud Report 2025, indicating that while overall fraud remains relatively flat, its sophistication is on the rise.

Current fraud trends indicate that criminals are exploiting these advances to make their attacks more sophisticated, personalized, and automated than ever.

These fraud trends should raise alarm bells across the industry, as they are making fraud easier for criminals and harder for businesses to spot and stop.

What’s really behind fraud rates in 2026

While fraud rates declined slightly in 2025 compared to 2024, a worrying new trend suggests the dangers are growing. According to Sumsub’s 2025-2026 Identity Fraud Report, identity fraud is undergoing a ‘sophistication shift’, with ‘sophisticated fraud’ up 180% compared to 2024. This type of advanced identity fraud uses enhanced deception techniques, social engineering, and AI-generated identities to circumvent fraud prevention systems.

Developments in technology used by fraudsters, such as AI tools and autonomous fraud agents, have made it harder for businesses to fight back. Their sale by fraud-as-a-service providers has also meant that even unsophisticated criminals now have access to highly effective fraud techniques.

The global nature of fraud also presents challenges for fraud prevention efforts. Fraudsters can potentially target people and businesses from anywhere in the world, making them harder to identify, investigate, and prosecute. Additionally, payment details stolen in one country can be used in another, where fraud protections may be less robust.

In the field of fraud management, trends like these are being closely watched. Protection mechanisms must become even more sophisticated than the criminals’ methods and tricks.

Suggested read: Fraud-as-a-Service: How $20 Can Cause Millions in Damage

AI-driven fraud: Deepfakes, malware, AI agents, and beyond

AI scams typically involve sophisticated techniques such as synthetic identity fraud and deepfakes. At the same time, traditional tactics like phishing and vishing are becoming even more dangerous as AI enables highly personalized, scalable attacks. Together, these threats make fraud prevention much harder.

Deepfake scams: Video, audio, and photo attacks

In 2026, deepfakes are mainstream. AI has long been part of the fraud conversation: in earlier Sumsub Identity Fraud Reports, we discussed deepfakes and generative tools as new weapons in the fraudster’s toolkit. This year, however, the story is no longer about an isolated tool here or there. It’s about an entire AI ecosystem that industrializes fraud, in which models that generate documents, voices, or videos are paired with automation and fraud-as-a-service marketplaces.

Deepfake scams often deliberately target vulnerable people and exploit their very worst fears for financial gain. Consider the Florida mother who was conned out of $15,000 in July 2025 by an AI voice scam. She received a call that appeared to come from her daughter, who claimed she had been arrested after a serious car accident. Someone posing as a lawyer then told the woman her daughter needed $15,000 for bail, which the mother paid in cash before a request for further funds led another relative to suspect foul play.

While users should stay alert to AI threats and deepfake fraud trends in banking and other vulnerable industries, businesses can play an important role in reducing these risks by:

- Investing more resources in educating users about the risks of fraud.

- Using Digital Risk Protection services and deepfake detection tools to stop fake advertisements and the unauthorized use of their brand or name.

However, deepfake scams are just the tip of the iceberg. AI has dramatically expanded the range, scale, and believability of fraud, creating new difficulties for fraud detection and prevention.

Suggested read: How to Stay Ahead of Deepfake Evolution in 2026

Agentic AI and automated fraud campaigns

AI fraud agents are autonomous systems that use a sophisticated blend of generative content, scripting, and behavioral mimicry to attempt to pass businesses’ user verification systems.

This type of AI scam poses particular challenges for fraud detection and prevention, as AI agents can learn from failed attempts to improve their chances of success. They can also adjust their tactics in real time to counter the latest fraud prevention measures.

AI agents may offer a massive boost to fraud-as-a-service operations, placing highly sophisticated tools and techniques in the hands of low-skilled criminals. However, AI agents can also be used by businesses to combat fraud, setting up a potential “battle of the bots”.

Suggested read: From AI Agents to Know Your Agent: Why KYA Is Critical for Secure Autonomous AI

AI-mutated malware and adaptive cyberattacks

AI may also be changing how malware behaves, allowing ransomware and phishing campaigns to adapt in real time to victim behavior and evade detection and prevention measures before an attack is launched. Cornell University demonstrated this concept with AI-driven attack frameworks capable of bypassing most antivirus systems, posing a significant threat to businesses and the general public. When sold via fraud-as-a-service schemes, these AI-powered tools put sophisticated fraud within reach of a far wider range of criminals.

As large language models get smarter and biometric systems become harder to spoof, the arms race continues.

Synthetic identity fraud explained

Fraud trends across industries are increasingly driven by synthetic identities. Synthetic identity fraud involves creating false identities by stitching together AI-generated headshots, fabricated addresses, and real, stolen credentials to pass identity verification and onboarding due diligence at banks, crypto exchanges, and fintechs. These ‘Frankenstein identities’ can pass onboarding unnoticed, lie dormant, and later be used to commit fraud, sometimes at considerable scale.

As part of a large Toronto Police investigation called Project Déjà Vu, one person was found to have used synthetic identities to open hundreds of accounts at banks and financial institutions across Ontario. The scam resulted in confirmed losses of about CA$4 million (US$2.9 million).

Synthetic identity fraud detection relies on tactics such as behavioral analytics to spot unnatural behavior that suggests criminal activity, and on data discrepancy checks to identify inconsistencies in the information provided with the synthetic identity. AI and machine learning tools are crucial for protecting against this kind of Frankenstein identity, as they can keep up with the speed and volume of identities produced by criminals who are themselves using these advanced tools.
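To make the idea of a data discrepancy check more concrete, here is a minimal Python sketch, not a description of any production system. It assumes a hypothetical `Application` record with the fields shown and flags two simple inconsistencies typical of stitched-together identities: one national ID presented with different names or birth dates, and one phone number or address shared by more distinct identities than an illustrative threshold (`max_identities_per_contact`).

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Application:
    # Hypothetical onboarding record; field names are illustrative.
    applicant_id: str
    full_name: str
    date_of_birth: str  # ISO 8601, e.g. "1990-04-12"
    national_id: str
    phone: str
    address: str


def find_discrepancies(apps, max_identities_per_contact=3):
    """Return {applicant_id: [reasons]} for applications with suspicious overlaps."""
    flags = defaultdict(list)

    # Check 1: the same national ID presented with different names or birth dates.
    by_national_id = defaultdict(list)
    for app in apps:
        by_national_id[app.national_id].append(app)
    for national_id, group in by_national_id.items():
        personas = {(a.full_name.lower(), a.date_of_birth) for a in group}
        if len(personas) > 1:
            for a in group:
                flags[a.applicant_id].append(
                    f"national ID {national_id} used by {len(personas)} personas"
                )

    # Check 2: one phone number or address shared by many distinct national IDs.
    for field in ("phone", "address"):
        ids_by_value = defaultdict(set)
        for app in apps:
            ids_by_value[getattr(app, field)].add(app.national_id)
        for app in apps:
            count = len(ids_by_value[getattr(app, field)])
            if count > max_identities_per_contact:
                flags[app.applicant_id].append(f"{field} shared by {count} identities")

    return dict(flags)
```

In practice, addresses and phone numbers would need normalizing before comparison, and rules like these would be one signal among many (alongside behavioral analytics) rather than a verdict on their own.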

Suggested read: Synthetic Identities: When Data Becomes a Persona

Vishing with AI: Exploiting victims’ trust in the people they know

Voice-cloned calls can fool targets into thinking they are talking to someone they know. Vishing (short for ‘voice phishing’) uses this type of AI voice scam to extract sensitive information from victims. Vishing attacks can be highly effective, as most people are unprepared for such a sophisticated scam. Academic research on voice-cloning scams shows that people struggle to distinguish real voices from AI-generated ones: in one study, participants correctly identified AI-generated voices only 37.5% of the time, meaning most AI voices were mistaken for real humans.

Fraudsters frequently clone the voice of a family member in distress, such as a daughter or grandson, to trigger an emotional reaction and request money urgently.

Research also suggests that female synthetic voices can be persuasive. Experimental studies of female synthetic speech have shown that voice characteristics (tone, warmth, emotional delivery) can significantly influence user behavior and persuasion in digital interactions.

This connects to a broader design trend: many digital assistants and automated voices are intentionally feminized, partly because users often perceive female voices as warmer or more trustworthy.

Top industries at risk of fraud in 2026

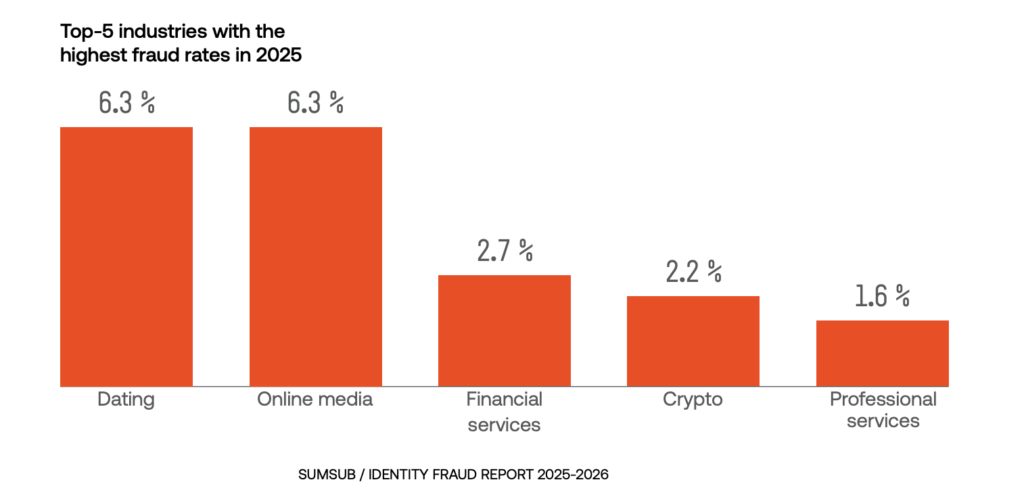

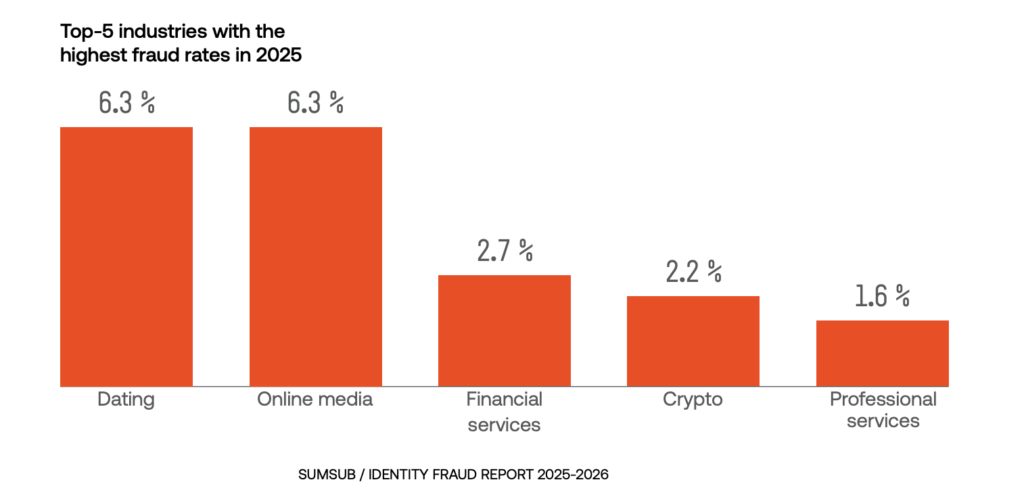

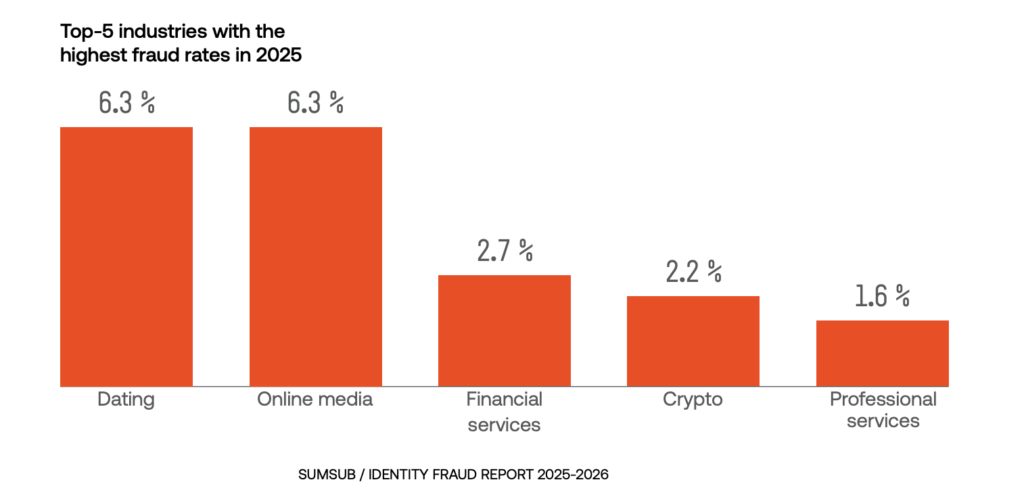

Sectors where user identity is crucial are particularly at risk, with the top five industries most affected by identity fraud in 2025 and 2026 being dating, online media, financial services, crypto, and professional services.

Dating and online media were by far the most targeted, each with a fraud rate of 6.3%.

Finance and banking fraud trends for 2026 are expected to include growing use of deepfakes and AI-generated documents that challenge the effectiveness of traditional identity verification systems. Fraud in financial services demonstrates the same worrying ‘sophistication shift’ as in other sectors. This means those in the industry must rapidly adopt next-gen fraud detection and prevention solutions to protect their customers and the integrity of financial systems.

Payment fraud trends include first-party fraud (when a person uses their own identity or account while lying about their intentions or facts), such as chargeback abuse, which accounted for 16% of first-party payment fraud in 2025. Chargeback abuse occurs when a customer disputes a legitimate transaction or misuses the chargeback process to avoid payment.

Payment fraud can also include third-party fraud, such as card testing, where criminals check stolen payment card details with small transactions before moving on to larger fraud. According to Sumsub’s Identity Fraud Report, card testing accounted for 17% of third-party fraud in 2025.
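Card testing tends to leave a recognizable footprint: a burst of tiny or declined authorizations on the same card. The sketch below is a simple sliding-window velocity rule in Python, offered as an illustration rather than a recommended configuration. The `Txn` fields and the default thresholds (probes of 2.00 or less, a 10-minute window, more than 5 probes) are hypothetical, and it assumes each card's transactions arrive in timestamp order.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Txn:
    card_id: str      # tokenized card reference, never the raw card number
    timestamp: float  # seconds since epoch
    amount: float     # in major currency units
    approved: bool


class CardTestingDetector:
    """Sliding-window velocity rule: too many small or declined attempts per card."""

    def __init__(self, small_amount=2.00, window_seconds=600, max_probes=5):
        self.small_amount = small_amount
        self.window_seconds = window_seconds
        self.max_probes = max_probes
        self._probes = defaultdict(deque)  # card_id -> timestamps of recent probes

    def observe(self, txn: Txn) -> bool:
        """Record a transaction; return True if the card now looks like it is being tested."""
        window = self._probes[txn.card_id]
        # A "probe" is a tiny charge or any declined attempt.
        if txn.amount <= self.small_amount or not txn.approved:
            window.append(txn.timestamp)
        # Forget probes that have slid out of the time window.
        while window and txn.timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_probes
```

Real deployments usually also key the same rule on device, IP address, and merchant, since testers rotate through many cards from one setup.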

In short, fraud in 2026 may not be more common, but it has become more sophisticated, more accessible, and more personalized, at an unprecedented scale.

Financial fraud: BEC, loan fraud, and insurance

The financial landscape has changed significantly in recent years, and it’s no surprise that the landscape of financial fraud is changing with it.

Business email compromise in 2026

Business Email Compromise (BEC) is a form of fraud that involves impersonation of trusted business contacts to induce unauthorized payments, credential disclosure, invoice changes, or data sharing. Business email compromise scams have evolved with AI-enhanced email spoofing, making them harder to spot. BEC is a form of social engineering fraud that exploits people's trust to get them to take actions that benefit the fraudsters.

According to the 2025 AFP Payments Fraud and Control Survey, BEC remains the primary method used in payment fraud: 79% of organizations experienced payment fraud attacks or attempts in 2024, and 63% of respondents cited BEC as the top fraud avenue. BEC and payment fraud prevention must, therefore, be among the key focuses for businesses in 2026.
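One common BEC pattern is email from a lookalike domain that differs from a real supplier's domain by a character or two. The Python sketch below illustrates that single check using edit distance; it is a hypothetical heuristic, not a complete defense. The trusted-domain list, the `max_distance` threshold, and the function names are assumptions for illustration, and in practice such a check would sit alongside email authentication (SPF, DKIM, DMARC) and out-of-band confirmation of any change to payment details.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance computed row by row with dynamic programming."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = curr
    return prev[-1]


def classify_sender(address: str, trusted_domains: set, max_distance: int = 2) -> str:
    """Label a sender as trusted, a near-miss lookalike of a trusted domain, or unknown.

    Assumes trusted_domains are already lowercase.
    """
    domain = address.rsplit("@", 1)[-1].strip().lower()
    if domain in trusted_domains:
        return "trusted"
    for trusted in sorted(trusted_domains):
        if edit_distance(domain, trusted) <= max_distance:
            return f"lookalike of {trusted}"
    return "unknown"
```

For example, `classify_sender("billing@acme-supp1ies.com", {"acme-supplies.com"})` labels the sender as a lookalike, which is exactly the case a busy accounts-payable clerk is most likely to miss.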

AI-enhanced insurance fraud tactics

Insurance fraud is also being supercharged by criminals’ use of AI tools. False claims and staged accidents, such as those in car insurance fraud, are now backed by AI-generated fake evidence, including photos, videos, and documents, making fraud investigations more complex and costly.

As the tools criminals use become more sophisticated, insurance companies need AI-powered detection that spots doctored images and forged documents instantly.

Mobile payment fraud: QR scams and takeovers

From buying a cup of coffee to paying your bills, mobile payment methods make our lives much easier. But they can also make scammers’ lives more convenient if you’re not careful, with mobile payment scams being one of the top fraud trends in banking right now.

Account takeover attacks in mobile banking

Fraudsters can use stolen credentials or exploit weak authentication to hijack mobile banking and payment accounts in account takeover attacks. Once inside, fraudsters can drain balances, make unauthorized transfers, or add new payment methods to siphon off money. This is known as ‘account takeover fraud’.

Sumsub’s 2025-2026 Identity Fraud Report showed that account takeover was the second-most-common type of third-party fraud, at 19%, behind identity theft at 28%.

QR code scams and fake payment apps

QR codes are everywhere and are increasingly used in payments, so they must be considered in fraud prevention planning. Fraudsters are fully aware of this and can swap legitimate QR codes for malicious ones that redirect users to phishing sites or initiate fake payment requests. One reported QR code scam case involved scammers placing counterfeit QR codes in Tyne & Wear Metro parking lots, tricking commuters into sending money to fraudsters.
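A payment app that scans QR codes can refuse to follow a code unless it points where it should. The Python sketch below shows one minimal way to do that with the standard library's `urllib.parse.urlsplit`: accept only HTTPS URLs whose host is on an allow-list (or a true subdomain of one). The function name and the example host `parking.example.com` are hypothetical, and decoding the QR image itself is out of scope.

```python
from urllib.parse import urlsplit


def is_trusted_qr_payload(payload: str, allowed_hosts: set) -> bool:
    """Accept a decoded QR payload only if it is an HTTPS URL on an allow-listed host."""
    parts = urlsplit(payload.strip())
    if parts.scheme != "https" or not parts.hostname:
        return False
    host = parts.hostname.rstrip(".")  # .hostname is already lowercased
    # Exact match or a genuine subdomain; "parking.example.com.evil.net" must fail.
    return any(host == h or host.endswith("." + h) for h in allowed_hosts)
```

Comparing the parsed hostname, rather than searching the raw string for the trusted name, is what defeats tricks like `https://parking.example.com@evil.net/`, where everything before the `@` is just user information and the real destination is `evil.net`.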

Cybercriminals can also develop spoofed mobile payment apps that look and feel identical to legitimate ones but steal users’ data and funds. These fake apps often spread through unofficial app stores or phishing links. In one case reported in November 2024, a fake application designed to target users of UPI, India’s instant payment system, was circulated across India via WhatsApp.

Social engineering in mobile payments

Fraudsters can impersonate trusted contacts or support agents via SMS or messaging apps, tricking users into approving fake transactions or sharing their one-time passwords. This is called ‘social engineering fraud’ because it relies on social interaction and psychological manipulation, or ‘engineering’, to trick people into making transactions or sharing sensitive information.

Suggested read: What Is Social Engineering Fraud and Why Is It on the Rise?

Real-time payment fraud in 2026

An important payment fraud trend to be aware of this year is the growing risk of scams targeting real-time payment systems. As more apps and platforms support instant transfers between accounts, fraud prevention measures must operate in real time, as the window to detect and stop suspicious activity is extremely limited.

These systems increase the impact of fraud, as funds can be moved quickly to criminally controlled accounts, often making recovery difficult. This is particularly evident in both account takeover (ATO) fraud and authorized push payment (APP) scams, where victims are manipulated into approving transactions themselves.

Given the widespread adoption of digital payment methods—for example, half of UK adults now regularly use mobile payments—these trends require close monitoring. As real-time payments become more common, the speed and irreversibility of transactions continue to challenge traditional fraud controls, reinforcing the need for continuous and adaptive risk detection.
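Because instant payments leave no time to intervene after settlement, checks have to run before the funds leave the account. The Python sketch below is a hypothetical pre-authorization rule that combines a few signals into an allow / step-up / hold decision; the field names, point values, and thresholds are illustrative assumptions, not recommended settings.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InstantPayment:
    # Hypothetical signals available at the moment an instant transfer is requested.
    amount: float
    payee_is_new: bool
    typical_payment: float                             # e.g. median recent outgoing payment
    minutes_since_credential_change: Optional[float]   # None if no recent change
    device_is_known: bool


def assess(p: InstantPayment) -> str:
    """Return "allow", "step_up", or "hold" before the funds leave the account."""
    score = 0
    if p.payee_is_new:
        score += 2
    if p.typical_payment > 0 and p.amount > 5 * p.typical_payment:
        score += 2
    # A password, phone, or email change shortly before a payout is a classic ATO pattern.
    if (p.minutes_since_credential_change is not None
            and p.minutes_since_credential_change < 24 * 60):
        score += 3
    if not p.device_is_known:
        score += 2
    if score >= 6:
        return "hold"     # pause and confirm through a separate channel
    if score >= 3:
        return "step_up"  # extra authentication and/or a scam warning to the payer
    return "allow"
```

The design reflects the difference between the two fraud types: in an ATO, the device and credential signals tend to fire, whereas in an APP scam the real customer is authorizing the payment on their own device, so only the new-payee and unusual-amount signals remain. That is why the middle tier pairs step-up authentication with a scam warning rather than relying on authentication alone.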

Crypto fraud: Pig butchering and new schemes

As the adoption of digital assets continues to grow, so does the creativity of bad actors exploiting emerging gaps for crypto fraud. As with fraud more broadly, fraud-as-a-service and AI are making cryptocurrency fraud more complex, personalized, and manipulative than ever before. The crypto sector is a popular target for tactics such as pig butchering scams, pump-and-dump schemes, and crypto drainers.

What is pig butchering, and how does it work?

One of the most damaging schemes is the ‘pig butchering’ scam. The term derives from the Chinese phrase “sha zhu pan” (杀猪盘), meaning “pig butchering.” It originated in China and has since become associated with large-scale fraud operations across Southeast Asia. The name refers to scammers “fattening up” victims by gaining their trust over time before “slaughtering” them, i.e., taking their funds.

In a pig-butchering scam, criminals build relationships with victims over an extended period and then persuade them to send money, often to fraudulent investment platforms. The initial contact is usually made through dating apps or messaging services.

According to Chainalysis, revenue from these investment scams grew by nearly 40% year-over-year, with many pig butchering fraud operations linked to organized criminal networks using AI to improve targeting and dialogue.

These criminal networks often involve pig butchering scam factories, which are large-scale cyber fraud operations that use human trafficking and forced labor for manpower. One such factory ran out of the Isle of Man, where a seaside hotel and former bank offices were used as the base for dozens of Chinese workers using computers and high-speed broadband to target victims around the world.

Suggested read: Pig Butchering: Inside the Billion-Dollar Scam Factories | “What The Fraud?” Podcast

Crypto drainer kits: Emptying wallets at scale

Crypto drainer kits are also booming. These malicious scripts are designed to empty cryptocurrency from a victim’s wallet to a wallet controlled by an attacker. Victims of this type of crypto fraud can be tricked into connecting their wallets to fraudulent platforms, like fake NFT marketplaces or DeFi services.

These kits are often sold as part of fraud-as-a-service packages on the dark web, giving this type of scam an extremely low barrier to entry and posing a significant challenge for fraud prevention. According to the Chainalysis Crypto Crime Report, drainers played a key role in the $2.2 billion stolen from victims in 2024.

AI-powered pump-and-dump in 2026

While pump-and-dump schemes are nothing new, AI has given crypto scammers far more options. In a pump-and-dump, fraudsters buy crypto assets at a relatively low price, then promote them to draw in other buyers and artificially ‘pump up’ the price, before quickly selling off (‘dumping’) their own holdings at a tidy profit.

Fraudsters can now use bots, synthetic social media accounts, and deepfakes of key opinion leaders in ‘pumps’ to drive up the prices of low-cap tokens. Once the price spikes, the scammers ‘dump’ their overvalued holdings, leaving retail investors with next to nothing. According to Chainalysis, 3.59% of all tokens launched in 2024 (74,037 out of 2,063,519) displayed patterns of pump-and-dump schemes.
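The shape of a pump-and-dump is easy to describe in code: a sharp rise to a peak followed by a collapse. The Python sketch below is a deliberately simple, hypothetical heuristic over a token's price series; the `min_pump` and `min_dump` thresholds are illustrative assumptions and are not the methodology behind the Chainalysis figure. The last line simply checks the arithmetic of the share quoted above.

```python
def looks_like_pump_and_dump(prices, min_pump=3.0, min_dump=0.7):
    """Flag a price series that rises to at least `min_pump` times its starting price,
    then gives back at least `min_dump` (a fraction) of that peak by the end."""
    if len(prices) < 3 or prices[0] <= 0:
        return False
    peak_idx = max(range(len(prices)), key=prices.__getitem__)
    if peak_idx in (0, len(prices) - 1):
        return False  # never rose, or still rising: no dump observed yet
    peak = prices[peak_idx]
    pumped = peak / prices[0] >= min_pump
    dumped = (peak - prices[-1]) / peak >= min_dump
    return pumped and dumped


# The share quoted above: 74,037 flagged tokens out of 2,063,519 launched.
print(f"{74_037 / 2_063_519:.2%}")  # prints 3.59%
```

A price path like `[1.0, 1.2, 2.0, 5.0, 4.8, 1.0, 0.9]` is flagged, while a token that rises and then only partly retraces is not. Real analyses would also look at who bought before the promotion and who sold at the peak.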

Pump-and-dump schemes are not limited to virtual assets.

In December 2025, four individuals in Australia were sentenced to terms of imprisonment ranging from 14 months to 2 years for orchestrating a coordinated scheme to inflate the value of Australian stocks before offloading them at artificially high prices. They were also each fined between AUS$8,015 (approx. US$5,317) and AUS$22,270.11 (approx. US$14,827) for a combined total of AUS$59,779.50 (approx. US$39,798). They could have faced up to 15 years in prison and fines exceeding $1 million, showing the seriousness with which these offenses are treated.

Suggested read: Top Crypto Scams to Be Aware of in 2026: A Guide for Businesses and Users

Dating industry: Romance scams, deepfakes, and emotional traps

Romance scams, unfortunately, remain a cruel staple of the fraud landscape, made even more realistic and personal by recent advances in AI. Dating platforms are particularly high-risk environments: Sumsub’s most recent Identity Fraud Report shows that they lead in fraud rates at 6.3%, more than double the rate seen in the financial services sector. While typically classified as social engineering, romance scams can also lead to identity fraud, for example, when victims’ personal information is later reused for account takeover or the creation of synthetic identities.

These scams often start on dating apps, social media, or chat platforms, where fraudsters can create believable profiles, sometimes using deepfake photos or videos to appear real. Once trust is established, the fraudster can create an elaborate story, such as a sudden medical emergency, to quickly extract money from a smitten victim. Romance scammers often carry out crypto fraud, urging victims to invest in fake crypto projects or send funds to wallets, exploiting the victim’s desire to help or perhaps build a future with a romantic partner. Romance fraud is also often used in pig butchering scams.

In October 2024, Hong Kong police arrested 27 people involved in a large-scale deepfake romance scam. The syndicate used AI face-swapping and voice-changing technology to create convincing fake personas on dating platforms, then lured victims into fake cryptocurrency investments with fabricated profit records. Victims were convinced by real-time deepfake video calls and lost millions.

To avoid these scams, users should always remember to cross-check names, photos, and bios online—scammers often use stolen identities. Users need to stay alert, and platforms should remind them to be especially cautious if someone asks for money or personal information, even in subtle ways.

Suggested read: Fraud Is in the Air: The Growing Threat of Online Romance Scams

Existing fraudster tactics that remain key threats in 2026

Alongside the new and emerging threats covered above, it is also important to stay aware of more established scams that are still commonly used by fraudsters, including:

- Fraud-as-a-service: FaaS has democratized cybercrime, allowing virtually anyone with basic technical skills to launch sophisticated attacks using toolkits often bought on the dark web.

- AI-driven scams: Impersonations generated with AI can deceive consumers and businesses, and are expected to continue in the years to come.

- Synthetic data attacks: AI-generated fake transaction histories and credit scores can be used to bypass identity verification.

- Subscription renewal scams: Fake renewal notices may trick victims into making payments to fraudsters.

- Formjacking: Malicious code on payment forms can steal card details during legitimate transactions. Online payment fraud methods such as these can be hard for users to spot, as there are often no immediate red flags that a form has been jacked.

- Click fraud: AI bots can mimic human clicks to inflate ad costs for businesses and skew metrics.

- Fake crypto exchanges: Counterfeit platforms can steal user deposits before disappearing.

- Flash loan attacks: These exploit vulnerabilities in DeFi smart contracts, using liquidity from flash loans to manipulate cryptocurrency prices and steal funds from protocols.

- Ransomware & blackmail: Extortion demands are often made in crypto following data encryption attacks.

- Data poisoning: Fake data can be fed to AI fraud detection systems to weaken fraud prevention and detection defenses.

Emerging fraud threats to watch in 2026

AI and other recent technological changes have led to nothing short of a revolution in fraud, enabling criminals to use advanced technologies and exploit systemic vulnerabilities at a scale never seen before.

Here are some of the most concerning new trends in 2026:

- The ‘sophistication shift’. In 2025, sophisticated fraud increased by 180%, using advanced deception techniques, social engineering, and AI-generated identities to circumvent anti-fraud systems.

- The emergence of AI-assisted forgery. 2% of fake documents involved AI-assisted forgery last year, up from 0% the year before.

- Fraud rates increase in Africa, APAC, and the Middle East. While fraud rates fell in the EU and the US, they rose by 9.3% in Africa, 16.4% in APAC, and 19.8% in the Middle East.

- AI fraud agents are here to stay. The first reports emerged in 2025 of AI fraud agents: autonomous systems used by scammers to commit fraud. These systems can operate independently, learning from previous experiences to evolve and adjust their strategies in real time.

- Fraudsters have graduated to telemetry tampering. This is where fraudsters target the data pipelines and signals underlying identity checks, rather than just the documents used for those checks. Criminals are now attacking the ‘telemetry layer’ within software and network systems that collect, transmit, and initially process user data.

Staying ahead of fraud trends: How users and businesses can stay safe online

The 2026 fraud landscape is increasingly complex and often frightening. Fraudsters are harnessing the powerful tools discussed above to bypass traditional defenses and undermine our sense of who we can trust.

To stay ahead of these evolving fraud trends, both businesses and individuals need to be proactively cautious. This means adopting essential fraud prevention measures, such as staying vigilant by verifying identities, using scam-tracking tools, and being cautious about anything that feels wrong, like unsolicited or urgent requests for money.

For individuals, staying safe increasingly comes down to slowing things down and verifying before acting. This means double-checking requests for payments or sensitive information, avoiding links or messages from unknown sources, enabling multi-factor authentication on key accounts, and keeping devices and apps up to date.

Even small habits—like pausing before approving a transaction or confirming a request through a separate channel—can significantly reduce the risk of falling victim to increasingly sophisticated scams.

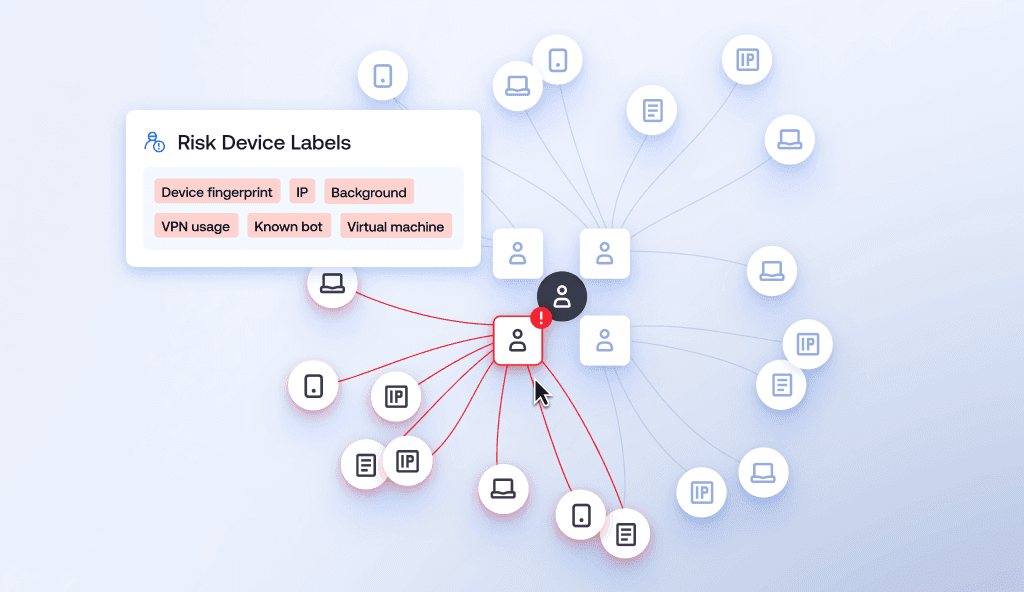

For businesses, a multi-layered, adaptable strategy is key to modern fraud prevention, and Sumsub champions this through advanced fraud prevention solutions. By combining AI-powered real-time monitoring, adaptive identity verification, device intelligence, and integration of biometric checks, companies can detect suspicious activity before it has a chance to escalate.

Suggested read: Fraud Detection and Prevention—Best Practices for 2025

Fraud prevention strategies for businesses

In 2026, the traditional defenses that have largely kept us safe until now face new and rapidly changing threats, and fraud prevention solutions must evolve to keep pace.

Multi-layered fraud detection tools

The good news is that fraud detection and prevention practices are evolving just as rapidly as fraud itself. Companies should implement comprehensive, multi-layered fraud prevention solutions that can detect fraud at various stages, and fight AI with AI.

Key fraud prevention tools to fight scammers in 2026 include:

- Advanced identity verification solutions

- Biometrics

- Transaction monitoring

- Behavioral pattern checks

- Device fingerprinting

- Background checks

- Password protection

- Deepfake detection

- Public education about staying safe

- Collaboration between financial institutions, law enforcement, and tech firms
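Layering matters because a deepfake or synthetic identity that beats one check still has to beat the others. The Python sketch below illustrates that idea with a simple weighted combination of per-layer risks plus a couple of hard stops. Every field name, weight, and threshold is a hypothetical assumption for illustration; in the spirit of "fight AI with AI", a production system would more likely learn such weights from labeled fraud data than hard-code them.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class Session:
    # Hypothetical outputs from upstream checks; names and ranges are illustrative.
    document_check_passed: bool
    liveness_score: float          # 0.0 = likely spoof/deepfake, 1.0 = likely live person
    device_seen_in_fraud_ring: bool
    behavior_anomaly: float        # 0.0 = human-like, 1.0 = bot-like interaction
    email_age_days: int


# Each layer maps a session to a risk in [0, 1] and carries a weight (weights sum to 1).
LAYERS: Dict[str, Tuple[Callable[[Session], float], float]] = {
    "document": (lambda s: 0.0 if s.document_check_passed else 1.0, 0.25),
    "liveness": (lambda s: 1.0 - s.liveness_score, 0.30),
    "device": (lambda s: 1.0 if s.device_seen_in_fraud_ring else 0.0, 0.20),
    "behavior": (lambda s: s.behavior_anomaly, 0.15),
    "email": (lambda s: 1.0 if s.email_age_days < 7 else 0.0, 0.10),
}


def decide(s: Session, review_at: float = 0.3, reject_at: float = 0.6) -> Tuple[str, float]:
    """Combine layer risks into one weighted score, with a few hard stops."""
    risks = {name: layer(s) for name, (layer, _) in LAYERS.items()}
    total = sum(risks[name] * weight for name, (_, weight) in LAYERS.items())
    # Hard stops: a clear liveness failure or a known fraud-ring device overrides the average.
    if risks["liveness"] > 0.8 or risks["device"] == 1.0:
        return "reject", total
    if total >= reject_at:
        return "reject", total
    if total >= review_at:
        return "manual_review", total
    return "approve", total
```

The hard stops keep one strong signal from being averaged away by several weak "all clear" signals, while the weighted total routes borderline cases to manual review instead of forcing a binary decision.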

Identity verification and biometric defense

To stop fraud in 2026, verification processes must prove a person is physically real, not just that their data is correct. As AI-generated fakes and synthetic identities become more convincing, simply checking an ID card is no longer enough.

The most effective approach is to verify the user's physical presence during the application or claim process. This means moving beyond static data checks to look for "liveness": subtle physical cues, such as skin texture, 3D depth, and natural movement, that an AI-generated image cannot yet replicate perfectly.

By combining traditional document verification with real-time physical analysis, organizations can block account takeovers and fake identities without adding unnecessary steps for genuine customers.

Fraud prevention solutions for 2026

Some solutions that companies need to keep up with current fraud prevention trends include:

- User Verification

- Biometric Identity Verification

- Business Verification

- Transaction Monitoring

- Email & Phone Risk Assessment

- Liveness Detection

- Device Intelligence

- Risk Scoring

- Fraud Network Detection

- Behavioral Monitoring

Fraud trends 2026 FAQ


What is the top fraud trend in 2026?

The move toward sophisticated fraud is the key current fraud trend to watch in 2026. This refers to criminals’ use of advanced, AI-powered techniques to make their fraud harder to detect. Key emerging fraud trends within this broader trend include scammers deploying AI fraud agents and creating hard-to-detect synthetic identities that blend real and fake information.


What is pig butchering, and how does it work?

A pig-butchering scam involves criminals building a fake, often romantic, relationship over time to lure victims into investing in fraudulent crypto schemes, only to drain their funds once trust is established. The term ‘pig butchering scam’ came to be used as it evokes an image of fattening a pig before slaughtering it.


How is AI used in modern fraud and scams?

Fraudsters can carry out a variety of AI scams. These include deepfake scams, in which criminals create highly convincing documents, videos, advertisements, and other media as part of their operations. Other applications include AI voice scams (where fraudsters call victims using recreations of the voices of loved ones and other trusted individuals), which can be used to convince victims to hand over funds to criminals or to reveal sensitive information (known as ‘voice phishing’ or ‘vishing’). AI is also commonly used by criminals for synthetic identity fraud (where real and false information are blended to create hard-to-detect fake identities).


What is Business Email Compromise?

Business Email Compromise (BEC) is a form of fraud in which fraudsters impersonate senior employees or trusted partners (such as suppliers) of a business to trick employees into sharing sensitive information or making payments from the company's funds to the scammers.


What is account takeover fraud?

Account takeover fraud is where criminals take control of a victim’s online accounts (e.g., for banking, email, or social media) and use them to steal funds, make unauthorized purchases, or carry out other forms of fraud. This is also sometimes called an ‘account takeover attack’. An account takeover can have serious consequences for the victim and the platform that hosts the account, so account takeover prevention is a core duty for businesses.


What are the trends in check fraud?

Check fraud is rising again, driven by stolen mail, check washing, and the use of altered or counterfeit checks. Criminals are also combining traditional methods with digital tools, making detection harder and increasing losses.


What is Sumsub anyway?

Not everyone loves compliance—but we do. Sumsub helps businesses verify users, prevent fraud, and meet regulatory requirements anywhere in the world, without compromises. From neobanks to mobility apps, we make sure honest users get in, and bad actors stay out.