- Mar 02, 2026

How to Stay Ahead of Deepfake Evolution in 2026

Check out how AI deepfakes are evolving and discover proven strategies for detecting and preventing deepfake threats to protect your business.

Ever since a comical AI-generated video of Will Smith eating spaghetti went viral in 2023, developers have kept generating updated videos of the celebrity enjoying pasta as a benchmark for how far the technology has come. Recent iterations of this experiment are almost indistinguishable from reality. Beyond the humor, however, the trend is concerning: deepfake technology is advancing quickly. While criminals rarely invent new technologies, they often adopt them faster than many organizations can adapt.

There is now an easy-to-use and powerful ecosystem of deepfake tools capable of generating hyper-realistic audio, images, and video in seconds. Today, anyone with a smartphone can create synthetic content convincing enough to fool people, processes, and even security systems.

This poses a serious threat to businesses, with deepfake scams already being used to impersonate executives, bypass identity verification, and manipulate financial processes.

Understanding deepfake evolution

What are deepfakes?

Deepfakes are synthetic media in which a person's likeness is replaced with someone else's through advanced artificial intelligence techniques, often used to create convincing but false images or videos.

The idea behind a deepfake is nothing new. After all, people have been manipulating photographs and video to deceive others since the early days of photography and film.

Deepfakes as a technology, however, are relatively new. So what exactly is a “deepfake”?

The word is a portmanteau of “deep learning” and “fake,” and was first used on Reddit in 2017. Its meaning has since broadened: it can now refer to AI-generated video, voice cloning, face swaps, and even fully synthetic identities.

Deepfake AI relies on machine learning models trained on large datasets of images, videos, or audio samples. These models then learn to reproduce patterns so convincingly that the final result can be almost indistinguishable from reality.

The evolution of deepfakes has followed the same trajectory as AI in general, with rapid improvements in quality in parallel with wider accessibility.

How AI powers modern deepfakes

To understand how deepfakes work and how to combat them, it helps to look at the underlying technology. Most systems are built on deep neural networks: generative adversarial networks (GANs), autoencoders (paired encoder and decoder networks), or diffusion models.

In a GAN, one network (the generator) produces content, while another (the discriminator) tries to detect whether it is fake. Over thousands of training iterations, the generator becomes more skilled at producing realistic results. Tools built on such models can create an AI deepfake from just a few seconds of video or audio.
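The contest between the network that generates content and the one that tries to detect fakes is usually written as a minimax objective. As a reference point, here is the standard formulation from the GAN literature (it is not specific to any deepfake tool mentioned in this article), where G is the generating network, D is the detecting network, x is a real sample, and z is random noise fed to G:

```latex
\min_{G}\,\max_{D}\; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\!\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_{z}}\!\left[\log\bigl(1 - D(G(z))\bigr)\right]
```

D is rewarded for scoring real samples close to 1 and generated samples close to 0, while G is rewarded whenever D mistakes its output for real.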

Modern deepfake AI tools, however, often rely on diffusion models, which learn to generate new images, audio, or video from scratch by gradually removing noise from a random starting signal. The results are convincing deepfakes that criminals may use for fraud, social engineering, and identity deception at scale.

Deepfake statistics and trends

Recent deepfake statistics reveal explosive growth in synthetic media, with alarming implications for fraud risks. According to the Sumsub 2025 Identity Fraud Report, deepfake fraud accounted for 11% of all fraud globally in 2025. Deepfake fraud attempts nearly doubled in the UK, while the Maldives experienced a staggering 2,100% year-on-year growth in deepfake attacks. The development and accessibility of deepfake technology have also contributed to the shift toward more sophisticated fraud and to the resulting crisis of trust.

New generative models are also being released at a rapid pace, with major commercial and open-source upgrades coming almost monthly. This allows fraudsters to change their tactics continuously as tools become both more powerful and more accessible.

The scale of deepfake-driven fraud is approaching a crisis point. Research from Deloitte’s Center for Financial Services, cited in the Wall Street Journal, indicates that deepfake scams could cost the financial sector US$40 billion by 2027. Meanwhile, North Korean bad actors are using deepfakes to infiltrate organizations and raise money for the widely sanctioned regime.

Meanwhile, customer onboarding systems are seeing more advanced attempts to bypass biometric checks with synthetic identities and media, which have rapidly moved from a novelty to a threat.

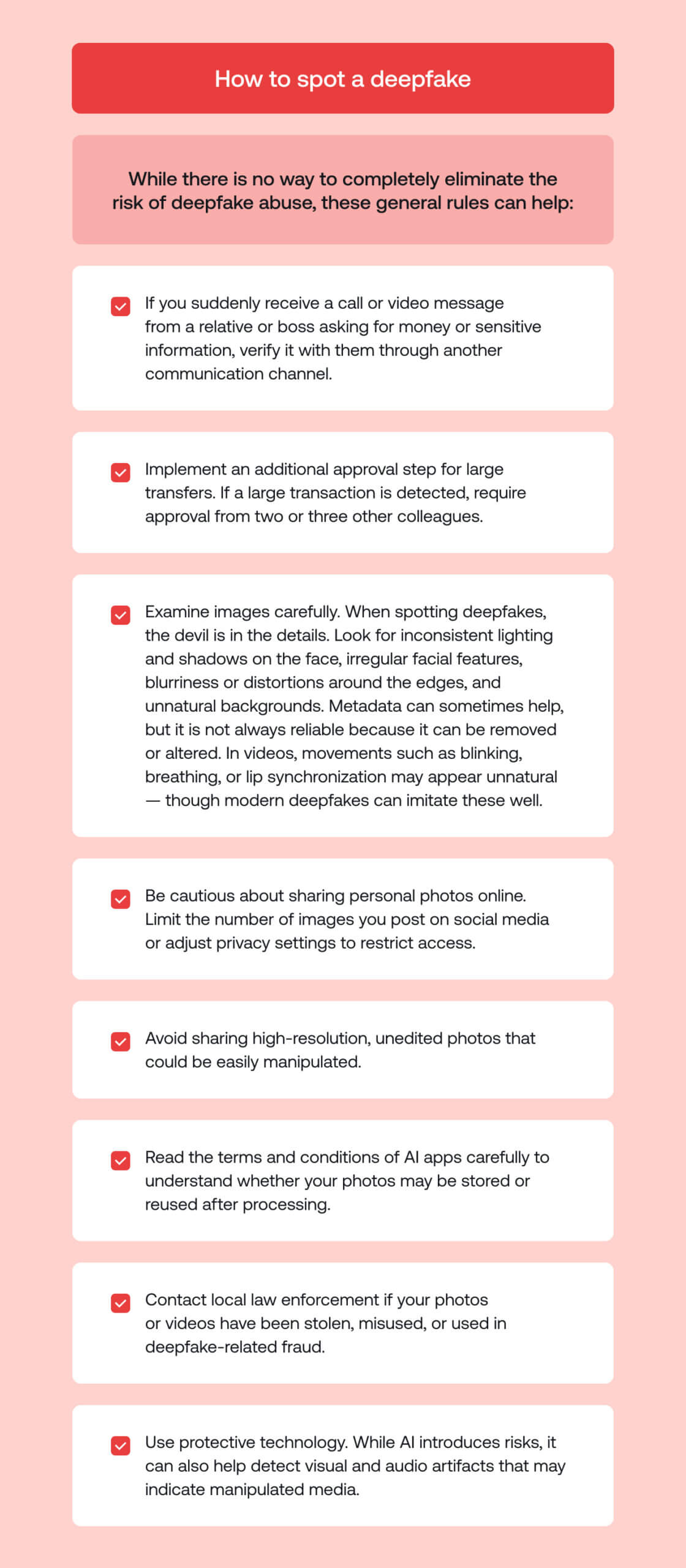

How to spot a deepfake

While effective tools and technology are essential for combating deepfake-driven fraud, individual education about the signs of deepfakes is crucial for maximizing your chances of staying safe. Knowing how to spot a deepfake can help employees and the general public alike avoid falling for common scams.

As deepfakes make scammers' fraud attempts more convincing, fraud becomes more personal. Generative AI is fueling cruel romance scams that rob victims of vast sums of money and toy with their emotions. One California woman lost more than $81,000 as well as her paid-off home after falling victim to a sophisticated AI-driven romance scam. The stolen funds are often sent to scam compounds known to use forced labor.

Warning signs of a deepfake include unusual blinking patterns, mismatched lighting, recordings with suspiciously exact lengths (e.g., exactly 10 seconds rather than 27.3 seconds), unusual background noise, unnatural facial movements, or audio that doesn’t perfectly match lip movements. These indicators are becoming harder to notice as quality improves, but if your gut is telling you something doesn’t feel right, it’s worth listening to it.

The safest approach is to treat any unexpected request, especially involving money or sensitive data, as potentially manipulated and to verify it independently.

While there is no way to completely eliminate the risk of deepfake abuse, these general rules can help:

- If you suddenly receive a call or a video message from a relative or boss asking for money or sensitive information, verify it with them through a separate channel, such as a different messenger.

- Implement a tool for additional approval for large transfers. If a large transaction is detected, the tool will request approval from two or three other colleagues.

- Examine images thoroughly. When spotting deepfakes, the devil is in the details, and you’ll often be able to recognize one by examining the direction of light and shadows on the face, inconsistencies in facial features, blurriness or distortions around the edges of the face, unnatural backgrounds, etc. You can also check the photo’s metadata to see if it has been manipulated or altered. In a video, deepfakes may not include natural blinking or breathing movements.

- Be cautious about sharing personal photos online. You can limit the number of personal photos you share on social media, or adjust privacy settings to restrict who can access your photos.

- Avoid sharing high-resolution, unedited photos that could be easily manipulated.

- Thoroughly read the terms and conditions of all AI apps to make sure they cannot keep using your photos once you have finished using the app.

- Contact local law enforcement agencies when your photos or videos have been stolen and/or used in an inappropriate manner, or if you have fallen victim to deepfake-related fraud.

- Utilize smart technology. While AI may pose danger, it’s also a remedy. AI can be used to detect certain visual or audio artifacts in deepfakes that are absent in authentic media.
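The additional-approval rule for large transfers from the list above can be sketched in a few lines. This is a minimal illustration, not a description of any particular product: the `Transfer` class, the threshold, and the number of required approvers are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass, field

# Hypothetical policy values, chosen only for illustration.
LARGE_TRANSFER_THRESHOLD = 10_000  # in the account's currency
REQUIRED_APPROVALS = 2


@dataclass
class Transfer:
    requester: str
    amount: float
    approvers: set = field(default_factory=set)

    def approve(self, colleague: str) -> None:
        # The person who requested the transfer cannot approve it.
        if colleague == self.requester:
            raise ValueError("requester cannot self-approve")
        self.approvers.add(colleague)

    def can_execute(self) -> bool:
        # Small transfers pass; large ones need enough distinct approvers.
        if self.amount < LARGE_TRANSFER_THRESHOLD:
            return True
        return len(self.approvers) >= REQUIRED_APPROVALS


transfer = Transfer(requester="alice", amount=250_000)
transfer.approve("bob")
print(transfer.can_execute())  # False: only one of two approvals so far
transfer.approve("carol")
print(transfer.can_execute())  # True
```

Because approvers are stored in a set, the same colleague approving twice still counts once, so a single convincing deepfake call to one person is not enough to release the funds.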

Visual indicators of fake content: Detection of deepfake images

When examining suspicious images, look for these giveaways of a deepfake:

- Unnatural skin textures or overly smooth facial features

- Inconsistent lighting or shadows

- Distorted or blurred backgrounds

- Strange reflections in glasses or eyes

- Glitches around hairlines, ears, or jawlines

- Unusual teeth rendering or oddly shaped mouths

- Resolution differences between the face and the surrounding image

Still images may appear flawless at first glance, but subtle distortions often reveal signs of manipulation.

Visual indicators of fake content: Detection of deepfake videos

When trying to detect deepfake video manually, common visual warning signs include:

- Distorted or blurry backgrounds that shift unnaturally

- Inconsistent lighting or shadows on the face or surrounding objects

- Unnatural skin textures, overly smooth areas, or strange reflections

- Irregular blinking patterns

- Mismatched lip movements that don’t fully align with speech

- Glitches around the hairline, jawline, or ears, where models often struggle

- Flickering facial features

- Unrealistic interaction with glasses, jewelry, or facial hair

- Unrealistic teeth rendering or oddly shaped mouths

- Resolution differences between the face and the rest of the video

However, relying on the human eye is becoming increasingly futile. Modern deepfake detection tools instead analyze micro-signals too subtle for people to notice, which makes it possible to catch more sophisticated deepfake manipulation.

Detection of audio deepfakes

Audio manipulation is often harder to recognize than video, but there are still common indicators that can help in deepfake voice detection. Practical signals to watch for include:

- Unnatural rhythm or pacing, with awkward pauses or inconsistent flow

- Emotionless or overly flat delivery that lacks normal human variation

- Strange background noise changes

- Abrupt shifts in tone or accent

- Metallic or robotic sound quality

- Pronunciation errors on complex or unfamiliar words

- Repetitive speech patterns that feel scripted

Effective deepfake audio detection may use AI systems that analyze hidden acoustic features, such as frequency anomalies and biometric voice patterns.

Deepfake threats to businesses

Like other forms of AI fraud, deepfake fraud poses a considerable risk to businesses because it can easily increase the level of sophistication in a scam. While businesses may be skilled at spotting traditional attacks, like phishing attempts and account takeovers, deepfakes are making it harder than ever to trust that people are who they say they are.

Because these attacks mimic legitimate behavior businesses would never ordinarily question, they pose a very real risk of bypassing traditional controls. Without updated detection systems, many organizations have little defense.

Major threats include:

- Business email compromise (BEC) enhancement: Deepfake audio or video can reinforce traditional phishing emails, making fraudulent payment requests more convincing.

- Fraudulent payment authorization: Criminals may use cloned voices or video calls to impersonate executives or finance staff and approve urgent wire transfers.

- Account takeover support bypass: Deepfake audio or video can be used to trick customer support teams into resetting passwords or disabling security controls.

- Recruitment fraud: Fake candidates using deepfake videos or synthetic identities may pass remote interviews to gain access to internal systems.

- Brand and reputation damage: Manipulated videos of company leaders making false statements can spread quickly, damaging trust and share value.

- Market manipulation: Fake announcements or fabricated executive messages can temporarily influence stock prices or investor confidence.

- Bypassing biometric verification: Sophisticated deepfake video or audio may attempt to defeat liveness checks or voice authentication systems.

- Impersonation of CEOs and senior executives: The most high-risk scenario involves deepfake video calls or voice cloning used to impersonate CEOs or CFOs, instructing employees to transfer funds or disclose sensitive data under the guise of urgent executive authority. Let’s take a closer look at this one.

Executive impersonation attacks

Among the most damaging scenarios is executive impersonation, where an “executive” may request huge fraudulent transfers or damage a company’s reputation. In 2024, attackers used deepfake video and audio of a senior manager to pressure an employee to transfer HK$200 million (US$25 million) in one of the world’s biggest deepfake scams to date.

In March 2025, a scammer impersonating a company’s CFO also contacted a finance director on WhatsApp, asking them to meet with a deepfaked CEO and other authority figures over Zoom. The victim was then told to transfer funds from Singapore to a bank in Hong Kong. Over US$494,000 was transferred, but the victim later suspected it was a scam after the fraudsters requested an additional US$1.4 million.

Thankfully, the victim alerted the bank, which notified Singaporean and Hong Kong authorities, and the transaction was reversed. This attack was possible with early 2025 deepfakes, when AI technology was less advanced than today.

AI-enhanced fraud detection tools can help catch scams like these, for example by determining whether a voice or video is AI-generated.

Verification and bypassing KYC

Another major risk is identity onboarding, with deepfakes now allowing fraudsters to bypass legacy KYC checks through AI-generated IDs that can be made for as little as US$15 in half an hour.

This makes deepfake detection for identity verification essential in a modern business environment. Legacy systems that only check static images are no longer enough, as fraudsters can generate synthetic identities that pass basic verification.

Vulnerable KYC systems also make it easier for money mules to infiltrate networks, facilitating money laundering and exposing regulated and non-regulated entities alike to major fines or significant reputational harm.

Modern KYC processes should therefore incorporate a multi-layered approach and advanced deepfake analysis capable of identifying subtle inconsistencies and patterns invisible to the human eye.

Deepfake detection technology for businesses

As fraud tactics grow more sophisticated, businesses should move beyond generic content screening and deploy purpose-built deepfake detection software. Modern deepfake detection systems typically combine three components: convolutional neural networks (CNNs) for visual artifact analysis, anti-spoofing and liveness detection algorithms to prevent presentation attacks, and multimodal AI models that analyze video, audio, and behavioral signals together.

CNN-based models can be used to identify subtle pixel-level inconsistencies, lighting anomalies, and artifacts that indicate synthetic generation. At the same time, anti-spoofing systems can detect attempts to bypass biometric verification through screen replays, injected video streams, 3D masks, or AI-generated overlays.

Deepfake detection software is also shifting toward behavioral and contextual analysis, monitoring micro-movements, response timing, device integrity signals, and session metadata.

However, detection models can decay quickly. Systems trained on last year’s GAN-based deepfakes may struggle against newer diffusion-based or real-time face-swapping techniques. As generative models evolve and are continuously fine-tuned, static detection pipelines lose accuracy.

To stay ahead of the deepfake evolution, businesses need detection architectures that support continuous retraining, adaptive model updates, and real-time risk scoring rather than fixed rule-based systems.
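One simple way to notice the model decay described above is to track how often the detector let through sessions that other checks later confirmed as fraudulent. The sketch below is an assumption-laden illustration: the `DecayMonitor` class, its window size, and its threshold are hypothetical, and a production system would likely use richer metrics.

```python
from collections import deque


class DecayMonitor:
    """Tracks how often the detector misses fraud that was confirmed later."""

    def __init__(self, window: int = 500, max_miss_rate: float = 0.10):
        # window and max_miss_rate are illustrative defaults, not recommendations.
        self.outcomes = deque(maxlen=window)  # True = detector missed the fake
        self.max_miss_rate = max_miss_rate

    def record(self, detector_flagged: bool) -> None:
        # Call once per session that was later confirmed as fraudulent.
        self.outcomes.append(not detector_flagged)

    def miss_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        return self.miss_rate() > self.max_miss_rate


monitor = DecayMonitor(window=10, max_miss_rate=0.2)
for flagged in [True] * 7 + [False] * 3:
    monitor.record(flagged)
print(monitor.needs_retraining())  # True: 3 of the last 10 fakes were missed
```

The rolling window means old results age out, so the signal reflects how the detector performs against current attack techniques rather than last year's.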

AI-powered detection solutions

Modern AI deepfake detection platforms use continuously retrained machine learning models, combined in ensembles that evaluate multiple aspects of a session, from facial micro-expressions to background noise. While AI is making fraud more accessible and convincing, it is also powering advanced liveness detection, fraud detection, document verification, and more to keep businesses and users safe.

AI deepfake detection systems can be integrated into onboarding flows, call centers, or transaction systems via a deepfake detection API or SDK. Real-time analysis helps block manipulated media before damage occurs.

Effective solutions are continuously improved as new deepfake fraud techniques emerge.

Multi-layer defense strategies

No single tool can provide full deepfake protection. Effective deepfake fraud prevention requires layered defenses, with biometric checks, behavioral analytics, device intelligence, and AI-based media verification working together.

Organizations should adopt a holistic strategy combining liveness detection, strong authentication, document authenticity signals, graph neural network (GNN) fraud detection, and continuous model improvement. As generative AI evolves rapidly, attackers adapt their techniques, requiring defenses to be regularly updated based on emerging fraud patterns.

This makes it easier to stay safe from fraudsters who constantly shift their tactics to cause harm.
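To make the layered approach concrete, here is a minimal sketch of how signals from several layers might be combined into one decision. Everything in it is an assumption for illustration: the layer names, the weights, and the thresholds are hypothetical, and a real system would tune or learn them from data.

```python
# Hypothetical weights per defense layer; a real system would tune or learn these.
WEIGHTS = {
    "liveness": 0.35,
    "media_authenticity": 0.30,
    "device": 0.20,
    "behavior": 0.15,
}


def risk_score(signals: dict) -> float:
    """Combine per-layer risk signals (0.0 = clean, 1.0 = certain fraud)."""
    missing = WEIGHTS.keys() - signals.keys()
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    return sum(WEIGHTS[name] * signals[name] for name in WEIGHTS)


def decide(signals: dict, review_at: float = 0.3, block_at: float = 0.6) -> str:
    # Any single layer that is near-certain escalates to review,
    # even when the weighted average still looks low.
    score = risk_score(signals)
    if score >= block_at:
        return "block"
    if score >= review_at or max(signals.values()) >= 0.9:
        return "review"
    return "allow"


# The face looks genuine, but the device layer is highly suspicious.
session = {"liveness": 0.05, "media_authenticity": 0.10, "device": 0.95, "behavior": 0.20}
print(decide(session))  # review
```

The escalation rule is the point of layering: a deepfake that fools the media checks can still be caught because another, independent layer disagrees.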

Protecting your organization

AI introduces new forms of impersonation risk that organizations must address to achieve effective deepfake protection.

Policies should assume that digital interactions may be manipulated, and procedures for approving payments, changing bank details, or resetting accounts should include independent verification steps.

Within the next few years, regulators may explicitly reference synthetic media and impersonation AI as components of identity fraud risk, particularly for AML-obliged organizations, and expect firms to deploy advanced AI fraud prevention controls as part of their broader compliance frameworks.

Defending against AI-enabled fraud is an enterprise-wide responsibility that requires organizations to rethink not only who they trust, but how trust is established and verified.

Employee training and awareness

Many deepfake scams succeed because employees are unprepared for fraud’s latest shift in sophistication: seeing or hearing someone no longer proves they are who they appear to be. Regular training should explain how AI fraud works, demonstrate examples, and establish clear reporting channels.

Understanding deepfake scams also helps employees counter them on their own initiative. When a Ferrari executive was contacted by someone who appeared to be the company’s CEO, the impersonation was highly convincing and even mimicked the CEO’s southern Italian accent, yet the executive felt something was wrong. Acting on intuition, the executive asked a question that only the CEO would know the answer to. The scammer couldn’t answer, and Ferrari was spared a potentially major financial loss.

Verification protocol best practices

To successfully combat deepfake scams, businesses should employ multiple layers of protection, including AI-powered analysis, biometric authentication, and real-time behavior monitoring. These measures help secure the entire user lifecycle—from onboarding to transactions—ensuring robust defense against evolving threats. The best ways for businesses to spot deepfakes, therefore, include:

- Machine learning and AI. Machine learning and AI algorithms, such as Sumsub’s Liveness Detection, can outperform humans in spotting enhanced photos. Moreover, in October 2023, Sumsub released “For Fake’s Sake”, a set of machine learning-driven models that enable the detection of deepfakes and synthetic fraud. This tool is available for free download and use by all.

- Behavioral analytics. This process monitors for unusual patterns of behavior, such as multiple accounts opened with the same Social Security Number or inconsistencies in identity information.

- Fraud network detection. You can uncover interconnected patterns of suspicious activity on your platform using Sumsub’s AI-powered Fraud Network Detection solution. This tool provides you with the ability to identify fraud networks before the onboarding stage through AI, allowing you to apprehend an entire fraudulent network rather than just a single fraudster.

- Device Intelligence. Every verification session generates device signals: device fingerprint, IP address, and geolocation. Fraudsters often share infrastructure. The same device or IP appearing across many verification attempts signals fraud, regardless of whether the face looks real. When device signals flag a session as fraudulent, the liveness check is examined more closely. If the deepfake detector passed it, there’s a false negative to learn from.

- Document analysis. Fraudulent documents have their own detection patterns: inconsistent fonts, incorrect security features, and reused templates. When document fraud correlates with a face that passed deepfake detection, we have another signal.

- Injection detection. Deepfake generation and injection are separate problems with separate toolchains. A fraudster using a cutting-edge generator might still inject the output using a well-known method. A new injection method might be used with an older, detectable deepfake. Injection detection works by identifying artifacts of virtual cameras, screen replays, emulators, or manipulated data streams rather than analyzing the face itself.
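The device intelligence idea from the list above, where the same device appearing across many verification attempts signals fraud and exposes deepfake detector false negatives, can be sketched as follows. The session records, field names, and threshold are hypothetical and exist only for this example.

```python
from collections import defaultdict

# Hypothetical session records; field names are assumptions for this sketch.
sessions = [
    {"id": "s1", "device": "dev-A", "applicant": "u1", "deepfake_passed": True},
    {"id": "s2", "device": "dev-A", "applicant": "u2", "deepfake_passed": True},
    {"id": "s3", "device": "dev-A", "applicant": "u3", "deepfake_passed": False},
    {"id": "s4", "device": "dev-B", "applicant": "u4", "deepfake_passed": True},
]

MAX_APPLICANTS_PER_DEVICE = 2  # illustrative threshold


def shared_devices(sessions, limit=MAX_APPLICANTS_PER_DEVICE):
    """Return devices used by more distinct applicants than the limit."""
    applicants = defaultdict(set)
    for s in sessions:
        applicants[s["device"]].add(s["applicant"])
    return {dev for dev, users in applicants.items() if len(users) > limit}


def suspected_false_negatives(sessions, limit=MAX_APPLICANTS_PER_DEVICE):
    """Sessions on a shared device that the deepfake detector let through."""
    flagged = shared_devices(sessions, limit)
    return [s["id"] for s in sessions if s["device"] in flagged and s["deepfake_passed"]]


print(shared_devices(sessions))             # {'dev-A'}
print(suspected_false_negatives(sessions))  # ['s1', 's2']
```

Sessions returned by `suspected_false_negatives` are the ones worth a closer look, and, if confirmed as fraud, worth feeding back into detector training.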

Verification protocol for financial institutions

Financial institutions unsurprisingly remain prime targets for fraudsters, making deepfake fraud prevention for banks especially important. Best verification practices to account for the development of deepfake technology include:

- Multi-factor authentication for any high-risk transaction

- Call-back procedures using pre-registered numbers

- Real-time media verification during customer interactions

- Advanced analysis to detect anomalies

- Strict approval workflows for all payments
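The call-back procedure in the list above only works if the number comes from records made in advance, never from the request itself. A minimal sketch, assuming a hypothetical `REGISTERED_NUMBERS` directory and a fictional phone number:

```python
from typing import Optional

# Hypothetical directory of numbers registered in advance, outside any request.
REGISTERED_NUMBERS = {"cfo@example.com": "+1-555-0100"}


def callback_number(requester: str, number_in_request: Optional[str] = None) -> str:
    """Return the number to call back, ignoring whatever the request supplied."""
    registered = REGISTERED_NUMBERS.get(requester)
    if registered is None:
        # No trusted number on file: escalate instead of trusting the request.
        raise LookupError(f"no pre-registered number for {requester}")
    return registered


# The caller offers a "new" number; the procedure deliberately ignores it.
print(callback_number("cfo@example.com", number_in_request="+1-555-0199"))  # +1-555-0100
```

The `number_in_request` parameter is accepted but never used, which is the design choice being illustrated: contact details offered during a suspicious interaction are treated as untrusted.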

AI fraud detection in banking systems must now evaluate not only transaction data but also the authenticity of the people initiating those transactions.

Deepfake threats are evolving quickly, and detection models are degrading just as fast. To stay effective, organizations need solutions that update in real time, such as AI-powered Liveness Detection, to help tell fraudulent fiction from reality.

FAQ

- What is a deepfake?

A deepfake is AI-generated or AI-manipulated media that generally imitates someone’s appearance or voice. It can include face-swapped videos, cloned audio, or fully synthetic identities designed to look authentic.

- Are deepfakes illegal?

Deepfakes are not inherently illegal as a technology. However, certain jurisdictions, such as Italy and some US states, have introduced legislation to prosecute those who use deepfake technology to cause harm.

- How do deepfakes work?

Deepfakes are often made using machine-learning models, such as generative adversarial networks, that are trained on real images or audio. These models can learn to reproduce a person’s likeness and then generate new, realistic content.

- How can I detect a deepfake?

Basic signs include visual glitches and unnatural audio, but advanced fakes may require specialized deepfake detection tools that analyze content at a technical level rather than relying on human judgment.

- What is deepfake technology?

Deepfake technology refers to AI systems capable of creating synthetic media that mimics real or fictional people. Deepfake AI technology may be used in entertainment, but also for fraud and deception.
