- Mar 20, 2026

- 15 min read

AI Deepfakes and Creator Economy Fraud: Detection & Protection Guide 2026

AI fraud in the creator economy: Ways to detect it, protect identity, and stay ahead of voice cloning and impersonation scams.

Since 2020, digital creator jobs in the United States alone have increased more than sevenfold, with millions of creators around the world monetizing their content. The global creator economy is booming and is estimated to grow at a CAGR of 23.3% from 2025 to 2033.

However, the same technologies driving the creator economy are also introducing new risks. One of the most significant threats is deepfake fraud, where synthetic media generated by AI is used to impersonate real people.

Recent advances in AI technologies have made it cheap, quick, and easy to generate synthetic audio, video, and images that mimic a person convincingly enough to fool even that person’s loved ones. These are “deepfakes.” Because creators upload so much identifying material that can be fed into deepfake tools, they are especially exposed to influencer fraud, where attackers impersonate them for any purpose, from promoting investment scams to placing them in deepfaked pornographic videos.

The rise of AI-powered fraud in the creator economy

Creators face a growing threat to their security and brand reputation. Social media platforms, meanwhile, are coming under increased regulatory pressure to tackle these threats. Fraud prevention must now account for impersonation attacks coming from synthetic media and social engineering attempts looking to exploit both creators and their audiences.

How AI is changing the threat landscape for creators

Today, creating a convincing deepfaked video or voice recording takes little more than publicly available images or audio clips. Creators by nature have a strong online presence, which means there is plenty of readily available material for fraudsters to make a deepfake.

In 2026, fraudsters can use synthetic videos or voice clones to mimic just about anyone, from creators to executives, and steal not only their likeness but also the trust people have in them.

Recent viral AI videos recreating scenes with characters from Stranger Things show how easily generative tools can now fabricate convincing synthetic footage of well-known actors, and how rapidly such manipulated media can spread on social platforms.

These developments are reshaping how creators, other public figures, and platforms approach fraud prevention. Traditional identity checks and manual moderation are no longer sufficient to identify manipulated media at scale.

Why creators are prime targets for deepfake fraud

Creators and influencers are attractive targets for fraudsters not only because they share high-quality images, videos, and voice recordings—enabling convincing deepfakes—but also because they maintain trusted relationships with engaged audiences.

❗This combination of accessible content and direct influence makes it easier for attackers to impersonate them, manipulate followers, and execute scams at scale.

Common deepfake scams in this industry therefore involve impersonating influencers to promote investment opportunities or fake and potentially dangerous products, or to socially engineer followers in preparation for future fraud attempts.

The recent Gisele Bündchen deepfake scam in Brazil, in which deepfakes of the supermodel were used to promote non-existent products on Instagram, has shown that this can lead to losses of millions of dollars as well as significant reputational damage.

As AI tools continue to evolve, a key challenge for platforms is detecting the huge volume of manipulated media to keep creators and audiences safe from increasingly sophisticated forms of influencer fraud.

Suggested read: How to Stay Ahead of Deepfake Evolution in 2026

Understanding deepfakes: Types and mechanics

According to the EU AI Act, a “deep fake” refers to AI-generated or manipulated image, audio, or video content that resembles existing persons, objects, places, entities, or events and would falsely appear to a person to be authentic or truthful.

For creators, businesses, and platforms, understanding how these technologies work is essential for recognizing the risks they pose. Let’s dive deeper into the deepfake threats for creators and influencers.

What are deepfakes, and how are they made?

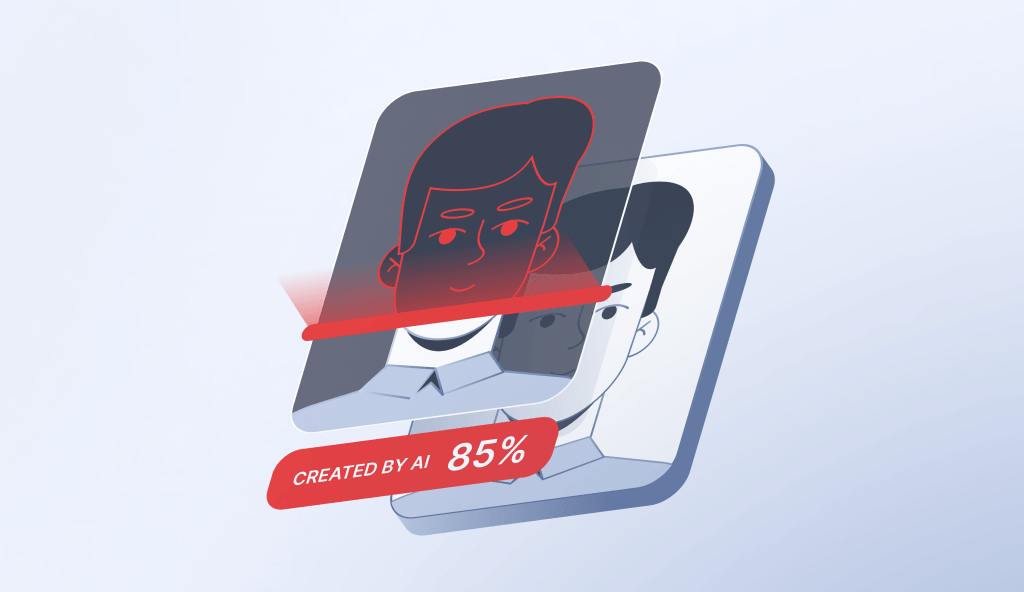

An AI deepfake relies on AI models trained on large datasets of images, videos, or audio. These models learn to reproduce patterns in the data, such as facial expressions or voices, so convincingly that the final result can be almost indistinguishable from reality. Deepfakes do not have to alter existing footage; they can also be generated entirely from scratch.

Although early deepfakes required technical expertise to create, modern tools have become far more accessible, allowing users to easily generate AI face swaps, where a person’s face is digitally replaced with someone else’s. Many face-swap AI tools rely on neural networks that map facial features and expressions from one individual onto another while preserving the context of the original. AI tools capable of generating deepfakes will only get more sophisticated as the AI field progresses.

AI voice cloning: The invisible threat

While manipulated video may receive more attention, AI voice cloning is just as serious a threat to creators. Voice cloning systems analyze recordings of a person speaking and generate synthetic speech that mimics their tone, accent, and speaking patterns.

Research from McAfee found that one in four people have experienced an AI voice cloning scam or know someone who has, highlighting how quickly the technology is spreading into everyday fraud.

In these scams, attackers may create audio messages that appear to come from a trusted individual, such as a well-known creator, company representative, or even a family member. These recordings may be used to persuade victims to send money, share sensitive information, or participate in fraud.

Voice cloning fraud can be difficult to detect. Even short audio samples from podcasts, livestreams, or any video available online may provide enough material for fraudsters to generate convincing cloned voices.

Face swap vs. full body synthesis: Key differences

AI face swap methods tend to focus on replacing a person’s face within existing footage. These tools are widely available and require relatively little training data.

Full-body synthesis is a more advanced form of synthetic media in which systems generate entirely new deepfake video content that recreates not only a person’s face but also their body movements and gestures within a simulated environment. This can allow attackers to fabricate scenes that never happened, such as a creator appearing to promote a product.

How deepfake fraud targets creators: The most frequent—and dangerous—AI scams

In a social media context, highly realistic audio or video of an influencer makes it easy to spread fraudulent content quickly and at scale, exposing creators to financial and reputational damage and causing financial and psychological harm to their loyal audiences.

Identity theft and impersonation scams

In deepfake identity theft, attackers use AI tools to replicate a person’s appearance or voice without their permission. The resulting fakes may be used in AI impersonation campaigns designed to deceive audiences.

Because the content appears to feature a familiar personality, victims may be less likely to question its authenticity. This makes deepfake scams particularly effective on platforms where trust between creators and audiences plays a central role.

MrBeast’s likeness, for example, was used in a deepfake scam ad offering iPhones for $2, exposing him to reputational risk.

Fake sponsorship and brand deal fraud

Deepfake technology can also enable influencer fraud involving fake brand partnerships or sponsorship opportunities. In these deepfake scams, attackers can impersonate either the creator or a brand to manipulate business relationships.

Fraudsters may contact brands while pretending to be a creator, offering promotional opportunities in exchange for payments. Alternatively, attackers may impersonate a brand representative and approach creators with fake sponsorship deals that are actually phishing campaigns.

Unauthorized monetization of synthetic content

Another key concern in the creator economy is the unauthorized monetization of synthetic content that fraudsters generate using a creator’s likeness.

This type of deepfake fraud allows a bad actor to create entirely fictional videos that appear to feature the creator and to profit from them, possibly without the victim ever knowing.

In one widely reported case in India, a man used AI tools to generate explicit deepfake images of a real woman and build a fake online persona using her identity. The account attracted more than a million followers and generated revenue through subscription-based content before authorities arrested the man.

Beyond financial harm, these practices can also damage a creator’s reputation and undermine their control over their own digital identity.

Recent analyses of deepfake ecosystems suggest deepfakes are heavily concentrated in sexualized content. One study of nearly 96,000 deepfake videos found that about 98% were pornographic, with 99% targeting women.

AI-generated fan scams and social engineering

Deepfakes are also used in social engineering attacks that may target fans and followers. In these scams, attackers may combine AI impersonation with social engineering strategies designed to exploit trust.

Scammers may steal photos and videos from real influencers to build convincing fake profiles used in so-called “pig-butchering” scams. Victims may be persuaded to invest in fraudulent cryptocurrency platforms while believing they are communicating with a trusted personality.

A fraudulent account may distribute deepfake social media content in which a creator appears to invite fans to exclusive groups, offer investment opportunities, or promote urgent charitable appeals. Followers who believe the message is genuine may be persuaded to send money, share personal information, or click on malicious links.

Suggested read: The Major Digital Trust Trends Shaping Asia in 2026

Non-consensual explicit deepfakes and image-based abuse

One of the most harmful uses of synthetic media is the creation of non-consensual explicit deepfakes, including AI-generated nudes and deepfake pornography. These materials are often produced using publicly available photos of celebrities, influencers, or private individuals and distributed without the subject’s consent.

In some cases, these images are used for monetization scams, where attackers distribute AI-generated explicit content to attract traffic, sell subscriptions, or impersonate creators on adult platforms. Victims may experience reputational damage, harassment, and significant psychological harm when manipulated images spread widely online.

Anyone can be a victim. In January 2024, explicit AI-generated images depicting Taylor Swift circulated widely online. The incident triggered global outrage and renewed calls for stronger protections against deepfake image-based abuse.

As generative AI tools become easier to access, non-consensual deepfake pornography may become one of the most widespread forms of synthetic media abuse, increasing pressure on platforms and regulators to improve detection and removal mechanisms.

A high-profile example of this issue is the controversy surrounding the Grok chatbot on the X platform. Users discovered they could upload photos of real people and ask the system to depict them in revealing clothing or sexualized poses. Researchers later estimated that around 3 million sexualized images were generated by Grok in just 11 days.

The incident triggered global backlash and regulatory scrutiny. Authorities, including the European Commission, opened investigations into whether Elon Musk’s X had failed to prevent the spread of illegal or harmful content under digital safety laws. Just recently, X accepted a €120 million EU fine for breaching transparency rules under the Digital Services Act.

Concerns about abusive uses of synthetic media have also been raised within the entertainment industry. Actor Mara Wilson warned that young performers could be vulnerable to AI-generated exploitation, describing the growing risk of a “deepfake apocalypse” as synthetic media tools become more accessible.

Deepfake identity theft is routinely used to humiliate creators. In 2026, influencer Ashley St. Clair filed a lawsuit against xAI, alleging that Grok had generated sexually explicit deepfake images of her without consent and distributed them across the X platform.

High-profile cases like this often draw public attention, while less established creators and private individuals may face even greater risks. Unlike celebrities, they typically lack access to legal support, platform escalation channels, or reputation management resources. Cases such as the targeting of schoolgirls in Almendralejo, Spain, where AI-generated nude images were created from social media photos, and incidents involving teachers subjected to synthetic explicit content, demonstrate that deepfake abuse is no longer limited to public figures.

For these individuals, non-consensual deepfakes can lead to reputational damage, sustained harassment, professional consequences, and significant psychological harm, often with limited avenues for recourse.

How to detect deepfakes: Tools, techniques, and red flags

While advances in the AI field have made synthetic media more realistic and deepfake fraud more sophisticated, AI-powered fraud detection solutions can help identify suspicious content.

❗Deepfake detection requires both human awareness and tools sophisticated enough to analyze media for subtle inconsistencies.

Learning how to detect deepfakes is increasingly important to limit reputational damage and prevent fraud.

When trying to detect deepfake content manually, common visual warning signs include:

- Distorted backgrounds that shift unnaturally

- Irregular blinking patterns

- Mismatched lip movements

- Glitches around the hairline, jawline, or ears, where models often struggle

In 2026, seeing is no longer believing, and relying on the human eye alone is becoming increasingly futile. Modern deepfake detection tools analyze micro-signals, such as pixel-level inconsistencies and irregular motion, to identify increasingly sophisticated manipulation.

❗However, deepfake detectors alone are not sufficient. These models are typically trained on past data, meaning they learn to detect the characteristics of earlier-generation deepfakes. As AI rapidly evolves, newer models eliminate those telltale flaws, leaving existing detectors one step behind.

In other words, deepfake detection is inherently reactive. The fight against deepfakes, therefore, requires a more holistic approach—one that combines detection with additional safeguards such as content provenance, watermarking, and broader ecosystem-level controls to clearly indicate when media is synthetic.

❗Crucially, this approach still depends largely on human attentiveness: individuals and their willingness to verify information remain the final line of defense against increasingly convincing digital deception.

Audio manipulation signals include:

- Unnatural rhythm or pacing

- Emotionless or overly flat delivery that lacks normal human variation

- Abrupt shifts in tone or accent

- Metallic or robotic sound quality

Effective deepfake audio detection may use AI systems that analyze hidden acoustic features, such as frequency anomalies and biometric voice patterns.
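To make the idea of an “acoustic feature” more concrete, here is a minimal Python sketch that computes one classic frequency-domain measure, spectral flatness, for a short audio frame. This is only an illustration of what analyzing a frequency pattern can look like; it is not a deepfake detector, and it is an assumption that a real system would use this particular feature at all. Production tools typically rely on learned models over many such features. The function names and the synthetic test frames are hypothetical.

```python
import cmath
import math
import random


def power_spectrum(frame: list[float]) -> list[float]:
    """Naive DFT power spectrum, skipping the DC and Nyquist bins."""
    n = len(frame)
    spectrum = []
    for k in range(1, n // 2):
        acc = sum(
            x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(frame)
        )
        spectrum.append(abs(acc) ** 2)
    return spectrum


def spectral_flatness(frame: list[float]) -> float:
    """Geometric mean divided by arithmetic mean of the power spectrum.

    Values near 0 mean energy is concentrated in a few frequencies (tonal);
    values closer to 1 mean energy is spread evenly (noise-like).
    """
    power = [p + 1e-12 for p in power_spectrum(frame)]  # avoid log(0)
    log_mean = sum(math.log(p) for p in power) / len(power)
    return math.exp(log_mean) / (sum(power) / len(power))


# Synthetic example frames (assumed data, not real speech):
tone = [math.sin(2 * math.pi * 8 * t / 256) for t in range(256)]
rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(256)]
```

Calling `spectral_flatness(tone)` gives a value very close to 0, while `spectral_flatness(noise)` is far higher. A detection pipeline might track features like this over time and pass them, alongside many others, to a trained classifier.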

Best AI deepfake detection tools in 2026

As deepfake technology evolves, deepfake detection tools are becoming crucial for screening digital media for signs of manipulation. These systems use machine learning models designed to identify artifacts invisible to the human eye.

A deepfake detector may analyze pixel-level inconsistencies, facial micro-expressions, blood flow signals, audio frequency patterns, or irregular motion dynamics within a video to flag potentially manipulated content for further review.
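As a rough illustration of how several weak signals might be combined into a single “send to review” decision, consider the Python sketch below. The signal names, weights, and threshold are hypothetical values chosen for the example; they do not describe any particular vendor’s detector, and a real system would tune or learn them from data.

```python
from dataclasses import dataclass

# Hypothetical per-signal weights; a real system would learn or tune these.
WEIGHTS = {
    "pixel_inconsistency": 0.35,
    "micro_expression": 0.25,
    "audio_frequency": 0.25,
    "motion_irregularity": 0.15,
}
REVIEW_THRESHOLD = 0.6  # assumed cut-off for routing media to human review


@dataclass
class Verdict:
    score: float
    needs_review: bool


def fuse_signals(signals: dict[str, float]) -> Verdict:
    """Combine per-signal suspicion scores (each 0.0 to 1.0) into one score.

    Signals that are not supplied are skipped and the remaining weights are
    renormalised, so an audio-only clip is not penalised for lacking video.
    """
    used = {name: w for name, w in WEIGHTS.items() if name in signals}
    if not used:
        raise ValueError("no known signals supplied")
    total_weight = sum(used.values())
    score = sum(
        min(max(signals[name], 0.0), 1.0) * w for name, w in used.items()
    ) / total_weight
    return Verdict(score=score, needs_review=score >= REVIEW_THRESHOLD)
```

For example, `fuse_signals({"pixel_inconsistency": 0.9, "audio_frequency": 0.7})` would be flagged for review under these assumed weights, while a clip with uniformly low scores would not. A weighted average is only one possible design; the point is that no single signal decides the outcome.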

Combining media analysis with AI fraud detection systems can help organizations identify signs of deepfake fraud earlier in the attack cycle.

Free vs. paid deepfake detectors: Comparison

While both free tools and paid, enterprise-grade deepfake detection tools aim to identify manipulated media, they are designed for different use cases and levels of risk exposure. Free deepfake detectors are typically aimed at individual users, journalists, or researchers who want to analyze a single piece of media. They often work through simple upload interfaces that scan for visual or audio artifacts associated with synthetic generation.

Enterprise-level systems, by contrast, are built for organizations that need to analyze large volumes of media or protect users from fraud at scale. These solutions may also integrate with moderation pipelines, identity verification systems, or fraud detection platforms.

How platforms detect and flag synthetic media

Platforms like YouTube increasingly use automated systems to detect and manage synthetic media. Systems may combine deepfake detection with behavioral monitoring designed to identify suspicious patterns of activity.

As deepfake content becomes more pervasive on social media, some platforms have also introduced deepfake policies governing the use of AI-generated media, which may require disclosure when synthetic content is used or restrict synthetic impersonation. Disclosure of synthetic material will soon also be needed for regulatory compliance in some jurisdictions, including the EU.

Suggested read: Bypassing Facial Recognition—How to Detect Deepfakes and Other Fraud

Protecting creator identity from AI manipulation

AI impersonation tools can replicate a person’s appearance or voice with increasing realism, making it easier for fraudsters to create misleading content or impersonate creators online.

In response, many organizations are exploring technologies designed to strengthen deepfake protections. Solutions such as digital watermarking, identity verification, and AI content authentication are emerging as tools for protecting creators and maintaining trust in online media.

Digital watermarking and content authentication

Creators may use digital watermarking technology, which can be integrated into media creation workflows, to add hidden markers or metadata that identify the source of an image, video, or audio recording. Watermarking systems can help identify whether content has been modified or generated using AI tools.

When combined with content authentication systems, these markers can help confirm whether a piece of media has been altered or generated using AI. Some emerging AI content authentication frameworks, like C2PA, allow platforms to track how content has been created or edited over time, providing a record of authenticity.

For creators, these technologies can serve as a form of provenance and help platforms and audiences distinguish genuine content from deepfakes.

Content authentication frameworks may also use cryptographic signatures, metadata tracking, or data provenance records to confirm when and how content was produced.
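The general idea of a signed provenance record can be sketched in a few lines of Python. This is a simplified illustration, not an implementation of C2PA: it uses an HMAC with a shared secret as a stand-in for the certificate-based signatures such frameworks rely on, and the manifest fields (`creator`, `tool`, `sha256`) are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(media: bytes, creator: str, tool: str, key: bytes) -> dict:
    """Build a provenance record for `media` and sign it with `key`."""
    claims = {
        "creator": creator,
        "tool": tool,
        "sha256": hashlib.sha256(media).hexdigest(),
    }
    # Serialise deterministically so signer and verifier hash the same bytes.
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "signature": signature}


def verify_manifest(media: bytes, manifest: dict, key: bytes) -> bool:
    """Return True only if the manifest is untampered and matches `media`."""
    payload = json.dumps(manifest["claims"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # claims were edited or signed with a different key
    return manifest["claims"]["sha256"] == hashlib.sha256(media).hexdigest()
```

If either the media bytes or the recorded claims change after signing, `verify_manifest` returns `False`. In practice, public-key signatures would let anyone verify a record without holding the creator’s secret, which is why the shared-key approach here should be read only as a teaching device.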

Creator identity protection best practices

Beyond technical and legal measures, creators can adopt practical strategies to strengthen deepfake protection and reduce the risk of impersonation:

💡Increase deepfake awareness within creator communities: Educating creators, platforms, and audiences about how synthetic media is used in scams helps improve detection and reporting of suspicious content.

💡Educate audiences about impersonation risks: Creators can proactively inform their followers about common scam tactics, including AI-generated content and fraudulent promotions, so they can better recognize warning signs.

💡Encourage active reporting of suspicious content: Building a culture of vigilance helps platforms and communities respond faster to emerging threats.

💡Leverage content authentication technologies: Use tools that verify the origin and integrity of digital content to help distinguish real media from manipulated or synthetic content.

💡Support stronger moderation practices: Work with platforms that invest in advanced moderation systems to detect and remove deepfake-driven fraud at scale.

Suggested read: Fraud Is in the Air: The Growing Threat of Online Romance Scams

Deepfake regulation and platform policies: How governments and social media are tackling AI impersonation

As the impacts of deepfake fraud and synthetic media become more noticeable, both technology platforms and regulators are taking steps to address the risks. Social networks host vast amounts of user-generated content, making them key battlegrounds in the fight against AI impersonation.

Many platforms have introduced deepfake policy frameworks designed to reduce misleading content and protect users from fraud. Governments are developing deepfake laws and broader deepfake legislation aimed at regulating the use of artificial intelligence. Together, these measures reflect a growing recognition that the spread of potentially harmful deepfake social media content requires oversight from both private platforms and public authorities.

The Grok controversy also accelerated regulatory pressure on social platforms. Several countries, including Indonesia and Malaysia, temporarily blocked access to the tool, while regulators in the EU and UK assessed whether it violated digital safety laws.

AI deepfake laws and legislation by country

Alongside platform policies, governments around the world are developing deepfake legislation to address synthetic media. Emerging deepfake laws typically focus on preventing deceptive impersonation, protecting individuals’ likeness, and preventing harmful uses of AI-generated content.

For example, the European Union’s Artificial Intelligence Act includes transparency requirements for synthetic media. Under Article 50, AI-generated or manipulated content like deepfakes needs to be clearly disclosed. The Act is intended to ensure AI applications that can affect the lives of those residing in the EU are “safe, transparent, traceable, non-discriminatory and environmentally friendly.”

China has also introduced regulations governing deep synthesis technologies. These rules require providers of AI systems capable of generating synthetic media to label manipulated content, verify the identities of users, obey the law, and obtain consent when a person’s likeness is used.

DMCA, right of publicity, and the creator

In addition to emerging deepfake laws, creators may also rely on existing legal frameworks to protect their likeness and content. For example, the right of publicity recognized in around half of the US states technically allows individuals to control how their name, image, or identity is used for commercial purposes. The proposed NO FAKES Act seeks to recognize this at a federal level, but has not been enacted.

If synthetic media uses a creator’s likeness without permission, the right of publicity may provide a legal basis for requesting removal or pursuing legal action, depending on the jurisdiction.

Creators may also rely on copyright-based mechanisms such as takedown procedures under the Digital Millennium Copyright Act (DMCA) when their original content is used to produce or distribute manipulated media.

Together, these legal tools, combined with evolving deepfake legislation, are becoming important for creators seeking protection against fraudulent impersonation in the age of synthetic media and the AI trust crisis.

Suggested read: Comprehensive Guide to AI Laws and Regulations Worldwide (2026)

How to register and protect likeness legally

Legal protections are also evolving to address the growing risks associated with deepfake technology. The right of publicity, recognized in about half of US states, gives people control over the commercial use of their name, image, or likeness and can serve as a form of deepfake protection. Unlike a registered trademark, the right of publicity usually arises automatically under state law rather than through a formal registration process.

However, creators can still strengthen protection of their identity through several legal mechanisms. For example, registering a trademark for a creator’s brand name, logo, or stage name can make it easier to issue takedown requests when those marks appear in deepfake content. Copyright protections may also apply to original content, such as photos and videos, giving creators additional grounds to challenge unauthorized reuse or manipulation.

Creators can also rely on contractual protections, particularly when working with agencies, platforms, or brand partners. Including clauses that restrict the use of their likeness, voice, or image in AI training or synthetic media generation can help prevent misuse at the source.

Enforcement mechanisms are equally important. Creators can use notice-and-takedown procedures on platforms, as well as legal tools such as cease-and-desist letters, to remove infringing or misleading content. In some jurisdictions, they may also pursue claims related to defamation, fraud, or misrepresentation, depending on how the deepfake is used.

Many jurisdictions have also enacted deepfake laws, including measures targeting non-consensual synthetic media, deceptive political deepfakes, and unauthorized use of a person’s likeness. Data protection and privacy laws, such as those governing biometric data, may also apply where facial or voice data is used without consent.

While deepfake legislation varies across countries, these protections can provide creators with avenues to challenge unauthorized use of their likeness, seek removal of harmful content, and, in some cases, pursue damages.

Social media deepfake policies: What creators need to know

Most major social platforms—including YouTube, TikTok, Meta, and X—have introduced policies governing the use of manipulated or AI-generated media, often requiring disclosure or restricting deceptive synthetic impersonation. These deepfake policies generally focus on preventing deceptive impersonation, election interference, and harmful or misleading content.

In the European Union, the Digital Services Act (DSA) also strengthens platform responsibilities for moderating harmful online content. While the regulation does not specifically define deepfakes, it introduces transparency requirements and “notice and action” mechanisms that allow users to report illegal or misleading content, which platforms must review and potentially remove.

For creators, this means platforms may remove or restrict harmful deepfake social media posts. In some cases, AI-generated content must be clearly labeled so audiences understand that the media is synthetic rather than authentic.

Building deepfake awareness and understanding platform rules can help reduce the risk of deepfake fraud spreading within creator communities, and can help creators know what to do if they are impersonated.

The future of AI fraud in the creator economy

As AI tools capable of creating deepfakes become more advanced and accessible, the creator economy is likely to face more impersonation, manipulation, and fraud driven by increasingly realistic synthetic media. However, organizations are investing heavily in AI-powered fraud detection technologies designed to counter these new threats.

Emerging AI threats: What’s coming next?

One of the most significant challenges will be the increased realism of deepfakes. People already struggle to spot AI-generated content, and advances in generative models will produce synthetic videos, voices, and images that may be impossible for human viewers to distinguish from reality. The technology has already triggered an unprecedented crisis of trust, as well as a surge in fraud.

This means even more sophisticated AI impersonation campaigns targeting creators and their audiences will follow. There will also likely be new types of deepfake scams, including coordinated social engineering campaigns that use synthetic media to build credibility and manipulate trust, and the use of agentic AI to execute scams at a never-before-seen scale.

Beyond this, emerging risks include real-time deepfake impersonation during live interactions, the combination of account takeovers with synthetic media to increase credibility, and the rise of persistent AI-generated “clones” capable of mimicking creators over time. These developments could significantly undermine audience trust, making it harder for followers to distinguish authentic content from manipulated media. As a result, the creator economy may increasingly rely on new forms of identity verification, content authentication, and platform-level safeguards to maintain trust.

Next-generation detection and authentication technologies

Advances in AI content authentication help platforms analyze large volumes of media for subtle signals associated with synthetic generation. By combining detection algorithms with platform-level safeguards, these technologies can be a line of defense against deepfake-driven fraud in the creator economy.

Suggested read: More Sophisticated—and More AI-Driven—Than Ever: Top Identity Fraud Trends to Watch in 2026

Conclusion: How businesses and creators can stay ahead of the deepfake threat

The scale of synthetic media abuse can escalate rapidly when safeguards fail. During the Grok controversy, researchers estimated that the system produced around 3 million sexualized images in just 11 days, an average of roughly three images per second, demonstrating how quickly AI tools can be misused at scale and why stronger deepfake protection measures are becoming increasingly important across digital platforms.

Researchers are also working to stay ahead of attackers who try to bypass identity checks using synthetic media. For example, Sumsub’s machine learning team recently tested whether a deepfake of a Stranger Things character could pass a liveness check, highlighting the importance of up-to-date verification systems.

AI-powered deepfake detectors, such as Sumsub’s Liveness Detection, can outperform humans in spotting enhanced photos. In October 2023, Sumsub also released “For Fake’s Sake”, a set of machine learning-driven models that enable the detection of deepfakes and synthetic fraud. This tool is available for free download and use by all.

While platforms and regulators are beginning to address the risks of synthetic media, creators cannot rely on these safeguards alone. Tools such as platform reporting mechanisms, copyright-based takedowns, rights related to the use of one’s likeness, and emerging measures like watermarking and content provenance provide important protection. However, their effectiveness depends on speed, awareness, and consistent implementation across platforms and jurisdictions. As deepfake technology evolves, creators must take a more active role in protecting their digital identity—by understanding platform policies, using available legal frameworks, and educating their audiences.

Staying ahead of the deepfake threat will require collaboration between technology providers, platforms, regulators, and creators. By combining strong verification systems, advanced detection technologies, and clearer signaling of synthetic content, businesses can strengthen protection and maintain trust across the creator economy. Ultimately, trust will depend not only on better technology and regulation, but also on more informed and vigilant creators and audiences.

AI deepfakes FAQ

What are AI deepfakes?

According to the EU AI Act, a “deep fake” refers to AI-generated or manipulated image, audio, or video content that resembles existing persons, objects, places, entities, or events and would falsely appear to a person to be authentic or truthful.

How do we stay protected against AI deepfakes?

Protection against AI deepfakes involves a combination of deepfake detection tools and heightened awareness. Staying alert to deepfake tactics and monitoring for suspicious content can help creators and platforms detect impersonation scams earlier.

Can deepfakes of influencers be used for scams?

Yes—fraudsters use AI-generated videos or voices to impersonate influencers and promote fake investments, giveaways, or products.

How can you tell if an influencer video is a deepfake?

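Look for visual and audio red flags such as mismatched lip movements, irregular blinking, or flat, robotic-sounding speech; verify the message through the influencer’s official channels; and be cautious of urgent or suspicious requests.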

What are the most common deepfake scams targeting influencers?

The most common deepfake scams targeting influencers and creators are fake investment promotions, giveaway scams, impersonation DMs, and AI-generated endorsements used to trick followers.

